Retrieval-Augmented Generation (RAG) is changing how modern AI systems deliver answers. Instead of relying only on pre-trained knowledge, RAG connects large language models (LLMs) to real-time, external data sources. This simple shift makes AI responses more accurate, more current, and far more useful in real-world scenarios.

- What is Retrieval-Augmented Generation (RAG)?

- What Exactly Are RAG Systems?

- Most Popular RAG Architecture

- How Retrieval-Augmented Generation (RAG) Works

- Step 1: Build a Knowledge Base

- Step 2: Convert Data into Vectors

- Step 3: Retrieve Relevant Information

- Step 4: Augment the Prompt

- Step 5: Generate Output

- Key Benefits of Retrieval-Augmented Generation (RAG)

- Cost-Effective AI Scaling

- Real-Time and Domain-Specific Data

- Higher Trust with Source Attribution

- Better Developer Control

- Continuous Updates

- Retrieval-Augmented Generation (RAG) Use Cases

- AI Chatbots and Customer Support

- Market Research and Analysis

- Internal Knowledge Systems

- Recommendation Systems

- Retrieval-Augmented Generation vs Fine-Tuning

- Retrieval-Augmented Generation vs Semantic Search

- Challenges of Retrieval-Augmented Generation

- Future of Retrieval-Augmented Generation

- FAQs on Retrieval-Augmented Generation (RAG)

- What is Retrieval-Augmented Generation in simple terms?

- How is RAG different from ChatGPT?

- Does RAG eliminate hallucinations completely?

- Is RAG expensive to implement?

- Can small businesses use RAG?

- Why is RAG important for modern AI applications?

- Final Thoughts on Retrieval-Augmented Generation

If you’ve ever felt that AI tools sometimes sound confident but slightly wrong, you’re not imagining things. Traditional models often behave like that one coworker who always has an answer, even when they shouldn’t. RAG addresses that problem by giving AI access to relevant source material before it responds.

What is Retrieval-Augmented Generation (RAG)?

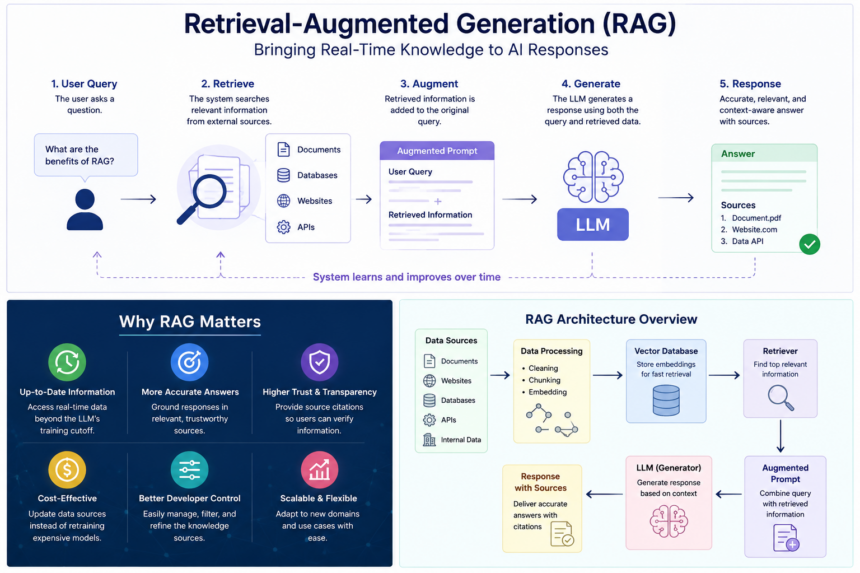

Retrieval-Augmented Generation (RAG) is an AI architecture that improves output quality by retrieving relevant data from external sources and combining it with a user query before generating a response.

In simple terms, RAG works like this:

- It searches for relevant information

- It adds that information to the prompt

- It generates a better, context-aware answer

Unlike standard generative AI models, RAG does not depend only on training data. It actively pulls fresh and domain-specific information from knowledge bases, APIs, or internal documents.

Why Retrieval-Augmented Generation is So Popular

Retrieval-Augmented Generation is gaining massive traction in 2026, and there are strong reasons behind it.

Solves Real AI Problems

RAG tackles outdated knowledge, hallucinations, and generic responses. Businesses need reliable AI, not creative guesswork.

Cost Efficiency

Companies avoid expensive model retraining. Instead, they update their data sources, and the new information becomes available to the model as soon as it is indexed.

Real-Time Intelligence

RAG connects to live data sources such as APIs, news feeds, and internal systems. This keeps AI relevant and useful.

Better Trust and Transparency

RAG systems can provide source-backed answers. Users trust AI more when they can verify information.

What Exactly Are RAG Systems?

RAG systems are complete frameworks that combine retrieval mechanisms with generative AI models.

A typical RAG system includes:

- A knowledge base with structured and unstructured data

- A retriever that finds relevant information

- A generator that produces responses

- A pipeline that connects everything together

Think of RAG systems as smart assistants that do research before answering questions.

Entire RAG Pipeline Explained

The Retrieval-Augmented Generation pipeline follows a structured process:

1. Data Collection

Gather data from documents, APIs, databases, and websites.

2. Data Processing and Chunking

Break large content into smaller pieces to improve retrieval accuracy.

3. Embedding and Vector Storage

Convert text into vectors and store them in a vector database.

4. Query Processing

Convert the user query into a vector, using the same embedding model that was used for the stored content.

5. Retrieval Step

Search the vector database using semantic similarity.

6. Prompt Augmentation

Combine retrieved data with the original query.

7. Response Generation

The LLM generates a final answer using enriched context.

This pipeline helps keep answers grounded in retrieved data rather than only in what the model memorized during training.
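The seven steps above can be sketched in a few lines of Python. This is a minimal illustration, not a production design: `embed` is a toy word-count stand-in for a real embedding model, each document is treated as a single chunk, and `echo_generator` is a hypothetical placeholder for the LLM call. All function names here are assumptions made for the example.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def embed(text):
    """Toy stand-in for an embedding model: a sparse word-count vector."""
    return Counter(tokenize(text))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def answer(query, documents, generate, top_k=2):
    # Steps 1-3: collect documents, treat each one as a chunk, and index it.
    index = [(doc, embed(doc)) for doc in documents]
    # Step 4: convert the query with the same embedding function.
    query_vec = embed(query)
    # Step 5: rank chunks by similarity and keep the best top_k.
    ranked = sorted(index, key=lambda item: cosine(query_vec, item[1]), reverse=True)
    context = [doc for doc, _ in ranked[:top_k]]
    # Step 6: combine the retrieved context with the original query.
    prompt = "Context:\n" + "\n".join(context) + "\n\nQuestion: " + query
    # Step 7: hand the enriched prompt to the generator (an LLM in practice).
    return generate(prompt)


def echo_generator(prompt):
    """Hypothetical placeholder: returns the prompt instead of calling an LLM."""
    return prompt


docs = [
    "Refunds are processed within 5 business days.",
    "Our office is closed on public holidays.",
    "Shipping is free for orders over 50 dollars.",
]
result = answer("How long do refunds take?", docs, echo_generator, top_k=1)
print(result)
```

Because the generator is passed in as a function, the retrieval half can be tested on its own before any model is connected.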

Most Popular RAG Architecture

Modern Retrieval-Augmented Generation systems follow a modular architecture.

Core Components

- Knowledge Base: Stores all external data

- Retriever: Fetches relevant information

- Ranker (optional): Prioritizes best results

- Generator (LLM): Produces final output

Architecture Variants

- Naive RAG: Basic retrieval + generation

- Advanced RAG: Includes reranking and filtering

- Hybrid RAG: Combines keyword search and semantic search

- Agentic RAG: Uses AI agents to decide what to retrieve

Hybrid and agent-based architectures are becoming popular because they improve accuracy and decision-making.
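To make the hybrid idea concrete, here is a small sketch of one common way to merge a keyword ranking with a semantic ranking: reciprocal rank fusion (RRF). The article does not prescribe a fusion method, so treat RRF as an assumption chosen for illustration. The document ids and the two input rankings are made up, and `k=60` is a commonly used default rather than a tuned value.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of document ids into one list, best first."""
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            # Each list contributes 1 / (k + rank); higher ranks contribute more.
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Sort by fused score (descending); break ties by id for a stable result.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


keyword_ranking = ["doc_a", "doc_b", "doc_c"]   # e.g. from a keyword search
semantic_ranking = ["doc_b", "doc_d", "doc_a"]  # e.g. from a vector search
fused = reciprocal_rank_fusion([keyword_ranking, semantic_ranking])
print(fused)
```

Documents that appear near the top of both lists rise to the top of the fused list, which is the behavior a hybrid retriever is after.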

How Retrieval-Augmented Generation (RAG) Works

Understanding how Retrieval-Augmented Generation works helps explain its performance advantage.

Step 1: Build a Knowledge Base

Data comes from multiple sources like documents, APIs, and internal systems.
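Before documents go into the knowledge base, they are usually split into smaller chunks, as the chunking step of the pipeline describes. The sketch below shows one simple approach, word-based chunks with a small overlap so that sentences cut at a boundary still appear whole in one chunk. The function name, chunk size, and overlap are illustrative assumptions; real systems often split on sentences, headings, or tokens instead.

```python
def chunk_words(text, chunk_size=50, overlap=10):
    """Split text into chunks of up to chunk_size words.

    Consecutive chunks share `overlap` words.
    """
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be non-negative and smaller than chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the last window already reached the end of the text
    return chunks


sample = " ".join(f"w{i}" for i in range(12))
chunks = chunk_words(sample, chunk_size=5, overlap=2)
for chunk in chunks:
    print(chunk)
```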

Step 2: Convert Data into Vectors

Text is transformed into embeddings and stored in vector databases.
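As a rough illustration of this step, the sketch below turns text into fixed-length vectors and keeps them in a list. Two things are assumptions for the sake of a runnable example: `toy_embed` uses the hashing trick instead of a learned embedding model, so it captures word overlap but not meaning, and `InMemoryVectorStore` is a hypothetical stand-in for a real vector database.

```python
import hashlib
import math
import re


def toy_embed(text, dim=64):
    """Map text to a fixed-length unit vector with the hashing trick (no learned model)."""
    vec = [0.0] * dim
    for token in re.findall(r"[a-z0-9]+", text.lower()):
        # A stable hash keeps the token-to-index mapping identical across runs.
        digest = hashlib.md5(token.encode("utf-8")).hexdigest()
        vec[int(digest, 16) % dim] += 1.0
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm else vec


class InMemoryVectorStore:
    """Hypothetical minimal store: keeps (text, vector) pairs in a list."""

    def __init__(self):
        self.items = []

    def add(self, text):
        self.items.append((text, toy_embed(text)))


store = InMemoryVectorStore()
store.add("Refunds are processed within 5 business days.")
store.add("Shipping is free for orders over 50 dollars.")
text, vector = store.items[0]
print(text, len(vector))
```

Normalizing each vector to unit length at indexing time means a later similarity search only needs a dot product.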

Step 3: Retrieve Relevant Information

The system finds the most relevant data using semantic search.
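In practice, "most relevant" often means "highest cosine similarity between the query vector and each stored vector". The sketch below shows that ranking on hand-written three-dimensional vectors, which are invented purely for illustration. A real system would use vectors from an embedding model and, at scale, an approximate nearest-neighbor index rather than this exhaustive scan.

```python
import heapq
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query_vec, index, top_k=2):
    """Return the top_k (score, text) pairs, best first. index is a list of (text, vector)."""
    scored = ((cosine_similarity(query_vec, vec), text) for text, vec in index)
    return heapq.nlargest(top_k, scored)


# Hand-written vectors, purely for illustration.
index = [
    ("refund policy", [1.0, 0.0, 0.0]),
    ("shipping rates", [0.0, 1.0, 0.0]),
    ("returns and refunds", [0.9, 0.1, 0.0]),
]
hits = retrieve([1.0, 0.0, 0.0], index, top_k=2)
for score, text in hits:
    print(f"{score:.3f}  {text}")
```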

Step 4: Augment the Prompt

Retrieved data is added to the user query.
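Augmentation is mostly string assembly. The sketch below shows one possible prompt layout; the wording of the instructions and the `build_prompt` name are assumptions, and teams typically tune this template for their own model and use case.

```python
def build_prompt(question, passages):
    """Combine retrieved passages and the user question into one prompt string."""
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer the question using only the context below. "
        "If the context is not sufficient, say so.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\nAnswer:"
    )


prompt = build_prompt(
    "How long do refunds take?",
    ["Refunds are processed within 5 business days."],
)
print(prompt)
```

Telling the model to admit when the context is insufficient is a simple guard against confident answers that the retrieved data does not support.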

Step 5: Generate Output

The LLM creates a response using both what it learned during training and the retrieved context.

Key Benefits of Retrieval-Augmented Generation (RAG)

Cost-Effective AI Scaling

No need to retrain models frequently. Update data instead.

Real-Time and Domain-Specific Data

Access to live and industry-specific information.

Higher Trust with Source Attribution

Users can verify answers using cited sources.
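One way to support this, sketched below under stated assumptions, is to number each retrieved passage in the prompt and then map any `[n]` markers in the model's answer back to source names. The file names, the citation format, and the sample answer are all hypothetical; the model is not called here, and a real model may not always cite reliably.

```python
import re


def build_cited_prompt(question, sources):
    """sources is a list of (source_name, passage). Returns (prompt, citation_map)."""
    lines = []
    citation_map = {}
    for number, (name, passage) in enumerate(sources, start=1):
        lines.append(f"[{number}] {passage}")
        citation_map[number] = name
    prompt = (
        "Answer using the numbered sources and cite them like [1].\n\n"
        + "\n".join(lines)
        + f"\n\nQuestion: {question}"
    )
    return prompt, citation_map


def resolve_citations(answer, citation_map):
    """Return the source names for every known [n] marker that appears in the answer."""
    cited = {int(n) for n in re.findall(r"\[(\d+)\]", answer)}
    return [citation_map[n] for n in sorted(cited) if n in citation_map]


sources = [
    ("refund-policy.md", "Refunds are processed within 5 business days."),
    ("shipping.md", "Shipping is free for orders over 50 dollars."),
]
prompt, citation_map = build_cited_prompt("How long do refunds take?", sources)
# Hypothetical model output, written by hand for this example.
model_answer = "Refunds take about 5 business days [1]."
cited_sources = resolve_citations(model_answer, citation_map)
print(cited_sources)
```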

Better Developer Control

Developers can adjust data sources easily.

Continuous Updates

Answers improve as the knowledge base is updated, without retraining cycles.

Retrieval-Augmented Generation (RAG) Use Cases

AI Chatbots and Customer Support

RAG powers intelligent chatbots that provide accurate and real-time answers.

Market Research and Analysis

Businesses use RAG to analyze trends, competitors, and customer behavior.

Internal Knowledge Systems

Employees can access company data instantly, improving productivity.

Recommendation Systems

Platforms use RAG to suggest products or content based on user behavior.

Retrieval-Augmented Generation vs Fine-Tuning

RAG:

- Uses external data

- Updates as soon as new data is indexed

- Lower cost

Fine-Tuning:

- Modifies the model

- Requires retraining

- Higher cost

Both approaches can work together for better performance.

Retrieval-Augmented Generation vs Semantic Search

Semantic search is a building block of most RAG systems rather than an alternative to them. It matches queries to content by meaning instead of exact keywords.

- Semantic Search finds relevant data

- RAG uses that data to generate answers

Together, they create powerful AI systems.

Challenges of Retrieval-Augmented Generation

Data Quality Issues

Poor data leads to poor answers.

System Complexity

RAG requires vector databases and data pipelines, which add infrastructure to build and maintain.

Security Risks

Sensitive data must be protected carefully.
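One common safeguard is to filter chunks by access rights before they can reach the prompt, so the model never sees text the user is not allowed to read. The sketch below is a minimal illustration: the `Chunk` class, the role names, and the `visible_chunks` helper are assumptions, and a real deployment would enforce this inside the retrieval layer alongside its existing permission system.

```python
from dataclasses import dataclass, field


@dataclass
class Chunk:
    text: str
    allowed_roles: set = field(default_factory=set)


def visible_chunks(chunks, user_roles):
    """Keep only chunks that share at least one role with the user."""
    roles = set(user_roles)
    return [c for c in chunks if c.allowed_roles & roles]


chunks = [
    Chunk("Public holiday schedule", {"employee", "contractor"}),
    Chunk("Executive salary bands", {"hr"}),
]
allowed = visible_chunks(chunks, ["employee"])
print([c.text for c in allowed])
```

Filtering before retrieval, rather than asking the model to withhold information, keeps the restriction independent of how the model behaves.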

Future of Retrieval-Augmented Generation

RAG is moving beyond answering questions. Future systems are expected to take actions based on the context they retrieve.

Examples include:

- Booking services automatically

- Making recommendations

- Automating workflows

This shift is closely tied to the rise of AI agents.

FAQs on Retrieval-Augmented Generation (RAG)

What is Retrieval-Augmented Generation in simple terms?

Retrieval-Augmented Generation is a method that improves AI answers by retrieving relevant external data and giving it to a language model along with the question.

How is RAG different from ChatGPT?

RAG is a system design, while ChatGPT is a chat application built on large language models. RAG can enhance the models behind tools like ChatGPT with external data.

Does RAG eliminate hallucinations completely?

No, but it can significantly reduce them by grounding responses in retrieved data.

Is RAG expensive to implement?

It is usually more cost-effective than retraining models, but it requires setup work such as vector databases and data pipelines.

Can small businesses use RAG?

Yes, many tools and cloud platforms make RAG accessible without heavy infrastructure.

Why is RAG important for modern AI applications?

RAG helps AI systems provide accurate, current, and context-aware responses, which is critical for real-world use.

Final Thoughts on Retrieval-Augmented Generation

Retrieval-Augmented Generation is one of the most important advancements in modern AI. It bridges the gap between static knowledge and real-time intelligence.

If you want AI that delivers accurate, context-aware, and trustworthy responses, RAG is becoming the standard. And honestly, an AI that checks facts before speaking already sounds smarter than most humans on the internet.

Sandeep Kumar is the Founder & CEO of Aitude, a leading AI tools, research, and tutorial platform dedicated to empowering learners, researchers, and innovators. Under his leadership, Aitude has become a go-to resource for those seeking the latest in artificial intelligence, machine learning, computer vision, and development strategies.