The AI landscape is evolving rapidly, but here’s what most developers miss: not all intelligent agents are created equal. While simple reflex agents follow rigid rules, advanced agents can plan multi-step strategies, optimize competing objectives, and learn from experience. These capabilities separate basic automation from truly intelligent systems.

- Understanding Advanced Agent Architectures

- Goal-Based Agents in Artificial Intelligence

- How Goal-Based Agents Work

- Key Characteristics of Goal-Based Agents

- Real-World Goal-Based Agent Examples

- Limitations of Goal-Based Agents

- Utility-Based Agents: Optimizing Beyond Goals

- Understanding Utility Functions

- Key Characteristics of Utility-Based Agents

- Utility-Based Agent Example: Dynamic Pricing System

- More Utility-Based Agent Examples

- Challenges in Utility-Based Design

- Learning Agents: Adaptive Intelligence

- Learning Agent Architecture

- How Learning Agents Improve Over Time

- Types of Learning in Intelligent Agents

- Real-World Learning Agent Examples

- Building Intelligent Agents: Practical Implementation

- Step 1: Define Your Agent’s Purpose and Scope

- Step 2: Choose the Right Agent Architecture

- Step 3: Design Your Agent’s Core Components

- Step 4: Implement Robust Evaluation and Monitoring

- Step 5: Build in Safety and Control Mechanisms

- Advanced Implementation Patterns

- Common Pitfalls and How to Avoid Them

- Pitfall 1: Overengineering Early Builds

- Pitfall 2: Unclear Business Objectives

- Pitfall 3: Poor Data Foundations

- Pitfall 4: Ignoring Reasoning and Orchestration

- Pitfall 5: Weak Governance and Oversight

- Tools and Frameworks for Building Intelligent Agents

- Real-World Success Stories

- The Future of Intelligent Agents

- Conclusion

Understanding goal-based agents in artificial intelligence, along with their more sophisticated cousins, utility-based and learning agents, is no longer optional for developers building production AI systems. These architectures power everything from autonomous vehicles navigating complex traffic to recommendation engines balancing user satisfaction with business metrics.

The numbers tell the story: 79% of organizations already employ AI agents in some capacity, and executives anticipate adoption rates will continue climbing as these systems generate quantifiable ROI. Yet over 40% of agentic AI initiatives are expected to be abandoned by the end of 2027, largely driven by cost and uncertainty around business value.

The difference between success and failure? Understanding which agent architecture fits your problem, how to implement it correctly, and when to combine multiple approaches for maximum impact.

This comprehensive guide takes you beyond theory into practical implementation. You’ll learn how goal-based agents plan toward objectives, how utility-based agents optimize trade-offs, how learning agents improve through experience, and, most importantly, how to build these systems yourself with real code examples and production-ready patterns.

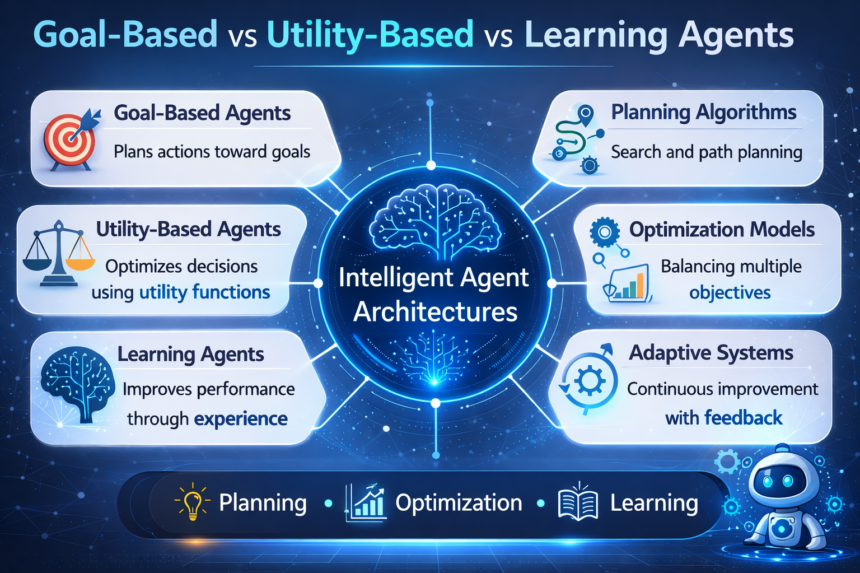

Understanding Advanced Agent Architectures

Before diving into implementation, let’s establish a clear mental model. Advanced intelligent agents exist on a spectrum of sophistication:

Simple Reflex Agents operate on condition-action rules without memory or planning. They’re fast and predictable but inflexible.

Model-Based Agents maintain internal state, tracking how the world changes based on their actions. They handle partial observability better but still lack strategic thinking.

Goal-Based Agents introduce planning and future-oriented reasoning. They evaluate actions based on whether they move toward desired outcomes.

Utility-Based Agents refine goal-based reasoning by assigning numerical value to outcomes, enabling optimization across competing objectives.

Learning Agents add adaptability, improving performance through experience and feedback.

The key insight: these aren’t mutually exclusive categories. Production systems often combine multiple approaches, using reflex behaviour for safety constraints, goal-based planning for task execution, utility functions for optimization, and learning for continuous improvement.

If you want to explore the fundamentals first, check out our complete guide on Types of Intelligent Agents in Artificial Intelligence here.

Goal-Based Agents in Artificial Intelligence

A goal-based agent in artificial intelligence represents a fundamental shift from reactive to proactive behaviour. Instead of simply responding to stimuli, these agents maintain explicit representations of desired states and systematically work toward achieving them.

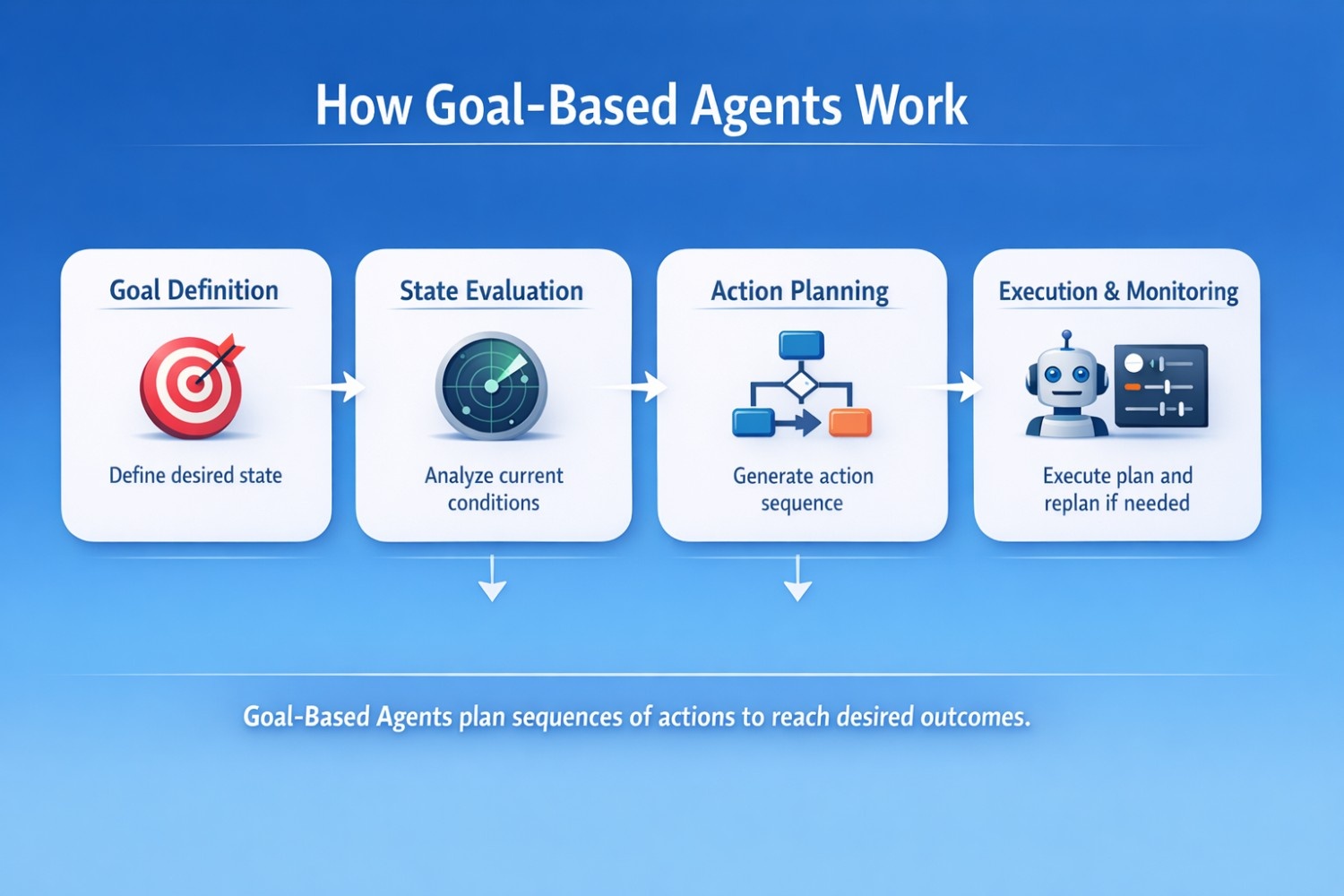

How Goal-Based Agents Work

Goal-based agents operate through a four-stage process:

- Goal Definition: The agent maintains clear, measurable objectives. For a delivery robot, the goal might be “reach building B, room 305” rather than just “move forward.”

- State Evaluation: The agent assesses its current state relative to the goal. How far away is the target? What obstacles exist? What resources are available?

- Action Planning: Using search algorithms (A*, Dijkstra’s, or more sophisticated planners), the agent generates sequences of actions that lead from the current state to the goal state.

- Execution & Monitoring: The agent executes the plan while continuously monitoring progress. If obstacles arise or conditions change, it replans dynamically.
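The plan-then-replan loop described above can be sketched with A* on a small grid. This is a minimal illustration, not a production planner: the grid format (a list of strings with `#` for blocked cells) and the function name `a_star` are assumptions made for this example.

```python
import heapq


def a_star(grid, start, goal):
    """Return a list of (row, col) cells from start to goal, or None.

    grid is a list of strings; '#' marks a blocked cell (hypothetical format).
    Moves are 4-directional with unit cost.
    """
    def heuristic(cell):
        # Manhattan distance is admissible for 4-directional unit-cost moves.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    frontier = [(heuristic(start), 0, start)]  # entries are (g + h, g, cell)
    came_from = {start: None}
    best_cost = {start: 0}

    while frontier:
        _, cost, current = heapq.heappop(frontier)
        if current == goal:
            path = []
            while current is not None:
                path.append(current)
                current = came_from[current]
            return path[::-1]
        if cost > best_cost[current]:
            continue  # stale queue entry, a cheaper route was found already
        row, col = current
        for nxt in ((row + 1, col), (row - 1, col), (row, col + 1), (row, col - 1)):
            r, c = nxt
            if not (0 <= r < len(grid) and 0 <= c < len(grid[0])) or grid[r][c] == "#":
                continue
            new_cost = cost + 1
            if new_cost < best_cost.get(nxt, float("inf")):
                best_cost[nxt] = new_cost
                came_from[nxt] = current
                heapq.heappush(frontier, (new_cost + heuristic(nxt), new_cost, nxt))
    return None  # goal is unreachable


# Action planning: find a route on the map the agent currently believes in.
grid = ["....",
        ".##.",
        "...."]
plan = a_star(grid, start=(0, 0), goal=(2, 3))

# Execution and monitoring: a new obstacle appears at (0, 3), so the agent replans.
blocked = ["...#",
           ".##.",
           "...."]
replanned = a_star(blocked, start=(0, 0), goal=(2, 3))
```

The goal never changes between the two calls; only the agent's picture of the world does, which is why replanning is just a second search from the current state.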

Key Characteristics of Goal-Based Agents

Future-Oriented Reasoning: Unlike reflex agents that only consider current inputs, goal-based agents evaluate how actions affect future states. They ask “Will this action bring me closer to my goal?”

Flexibility: Multiple paths can lead to the same goal. A navigation agent might choose different routes based on traffic, weather, or time constraints, all valid as long as they reach the destination.

Adaptability: When plans fail, goal-based agents can generate alternatives. If a road is blocked, the agent replans rather than getting stuck.

Binary Success Metric: Goal-based agents treat success as binary: either the goal is achieved or it isn’t. This simplicity makes them easier to design but limits their ability to handle trade-offs.

Real-World Goal-Based Agent Examples

Autonomous Navigation Systems

A self-driving car’s route planner is fundamentally goal-based. Given a destination, it generates a path considering road networks, traffic rules, and current location. The goal is clear: reach the destination safely and legally.

Project Management Agents

AI systems that coordinate complex projects operate as goal-based agents. They break down high-level objectives (launch product by Q3) into subtasks, assign resources, and monitor progress toward milestones.

Robotic Process Automation

RPA bots that complete multi-step workflows, such as processing loan applications, use goal-based reasoning. The goal is “application approved or rejected with complete documentation,” and the agent plans the sequence of validation, verification, and decision steps needed.

Strategic Game Playing

Chess and Go engines are sophisticated goal-based agents. The goal is “checkmate the opponent” or “control more territory,” and the agent plans move sequences that maximize the probability of achieving that goal.

Limitations of Goal-Based Agents

While powerful, goal-based agents have important constraints:

No Trade-Off Mechanism: If a robot must reach a destination but also minimize battery usage, a pure goal-based agent has no framework for balancing these concerns. It only cares about reaching the goal.

Computational Complexity: Planning in large state spaces is computationally expensive. Real-time applications may require heuristics or approximations.

Goal Specification Challenge: Poorly defined goals lead to unexpected behaviour. The classic example: an agent told to “maximize paperclip production” might convert all available resources into paperclips, ignoring other important considerations.

Environmental Assumptions: Plans depend on assumptions about how the world works. When those assumptions break, plans fail.

Utility-Based Agents: Optimizing Beyond Goals

Utility-based agents address the fundamental limitation of goal-based systems: the inability to handle trade-offs and optimize for quality rather than just success.

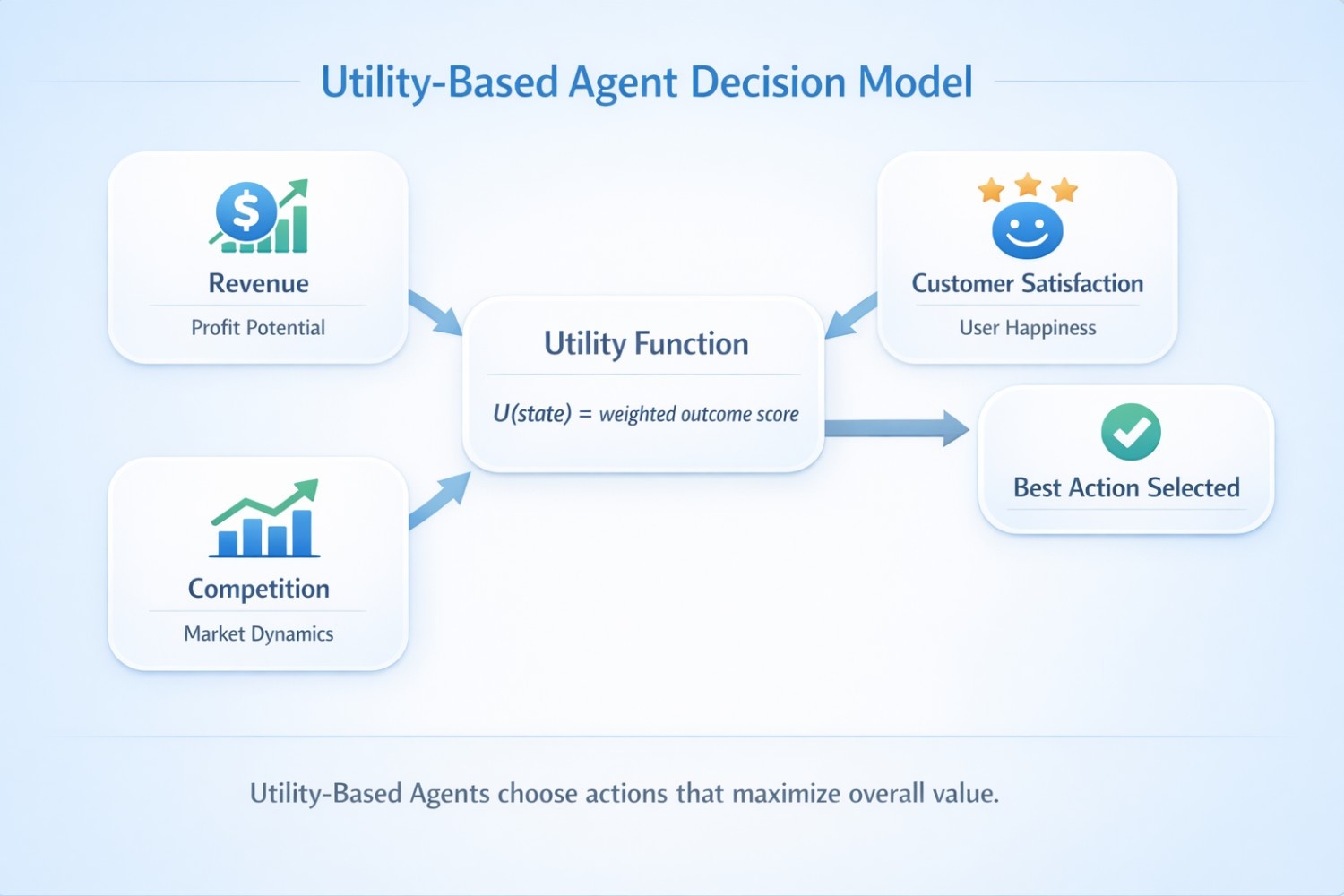

Understanding Utility Functions

A utility function assigns a numerical value to each possible outcome, representing its desirability. Instead of asking “Does this achieve my goal?” utility-based agents ask “How good is this outcome compared to alternatives?”

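Mathematical Foundation:

In its simplest and most common form, utility is a weighted sum of features:

$$U(\text{state}) = \sum_{i} w_i \cdot \text{feature}_i(\text{state})$$

where:

- U(state) is the utility value

- features are measurable aspects of the outcome

- weights (w) represent relative importance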

The agent selects actions that maximize expected utility across all possible outcomes.
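As a rough sketch of what "maximize expected utility" means in code, the example below scores two candidate actions whose outcomes are uncertain. The action names, probabilities, feature scores, and weights are all made up for illustration.

```python
def utility(features, weights):
    """Weighted sum of feature scores; both dicts share the same keys."""
    return sum(weights[name] * features[name] for name in weights)


def expected_utility(outcomes, weights):
    """outcomes is a list of (probability, features) pairs for one action."""
    return sum(p * utility(features, weights) for p, features in outcomes)


# Illustrative weights and outcome estimates (all values are assumptions).
weights = {"speed": 0.5, "safety": 0.5}
actions = {
    "fast_route": [
        (0.8, {"speed": 1.0, "safety": 0.5}),
        (0.2, {"speed": 0.0, "safety": 0.0}),  # chance of a failed delivery
    ],
    "safe_route": [
        (1.0, {"speed": 0.5, "safety": 1.0}),
    ],
}

scores = {name: expected_utility(outs, weights) for name, outs in actions.items()}
best_action = max(scores, key=scores.get)
```

Here the fast route scores about 0.6 and the safe route 0.75, so the agent picks the safe route. Shifting weight from safety to speed, or lowering the failure probability, can flip that choice.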

Key Characteristics of Utility-Based Agents

Explicit Trade-Off Management: Utility functions make priorities transparent. A delivery drone might balance speed (0.4 weight), battery efficiency (0.3 weight), and safety (0.3 weight) explicitly.

Graded Success: Unlike binary goal achievement, utility-based agents recognize that some outcomes are better than others. Delivering a package in 20 minutes is better than 30 minutes, even if both “achieve the goal.”

Multi-Objective Optimization: These agents naturally handle competing objectives by encoding them in the utility function. An e-commerce recommendation system might balance user preferences, profit margins, and inventory levels simultaneously.

Transparency: Well-designed utility functions expose decision logic that would otherwise be hidden in heuristics or learned behaviors.

Utility-Based Agent Example: Dynamic Pricing System

Let’s examine a real-world utility-based agent example in e-commerce:

Problem: Set product prices dynamically to maximize revenue while maintaining customer satisfaction and competitive positioning.

Utility Function Design:

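    def calculate_pricing_utility(price, product, market_data):
        """Score a candidate price; every component is scaled to roughly 0-1."""
        # Revenue component, expressed relative to revenue at the reference
        # price so that raw currency amounts cannot swamp the other scores.
        # predict_sales_volume is a demand model you supply (not shown here).
        expected_sales = predict_sales_volume(price, product.demand_elasticity)
        baseline_sales = predict_sales_volume(
            product.reference_price, product.demand_elasticity
        )
        revenue_score = (price * expected_sales) / (
            product.reference_price * baseline_sales
        )

        # Customer satisfaction component: quality per unit of price, relative
        # to the reference price (assumes quality_score is on a 0-1 scale)
        perceived_value = product.quality_score / price
        satisfaction_score = min(perceived_value * product.reference_price, 1.0)

        # Competitive positioning component, clamped so it never goes negative
        competitor_avg = market_data.get_competitor_average(product.category)
        competitive_score = max(
            0.0, 1.0 - abs(price - competitor_avg) / competitor_avg
        )

        # Inventory pressure component (does not vary with price in this
        # simplified version, so it shifts every candidate equally)
        inventory_ratio = product.current_stock / product.optimal_stock
        inventory_score = 1.0 if inventory_ratio < 1.0 else 1.0 / inventory_ratio

        # Weighted utility calculation
        utility = (
            0.40 * revenue_score +
            0.25 * satisfaction_score +
            0.20 * competitive_score +
            0.15 * inventory_score
        )
        return utility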

This utility function explicitly balances four competing objectives. The agent tests different price points and selects the one with highest expected utility.
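To make the "test different price points" step concrete, here is a small self-contained sweep. It is a sketch under stated assumptions: the linear demand curve, the competitor average, and the two-term utility with 0.7/0.3 weights are invented for this example and are simpler than the four-component function above.

```python
def demand(price):
    """Toy linear demand curve: units sold fall as price rises (made-up numbers)."""
    return max(0.0, 300.0 - 10.0 * price)


def revenue(price):
    return price * demand(price)


candidate_prices = [10.0 + 0.5 * i for i in range(31)]  # 10.00 to 25.00
max_revenue = max(revenue(p) for p in candidate_prices)
competitor_avg = 20.0  # assumed market average


def pricing_utility(price):
    # Revenue scaled to 0-1 so both terms are comparable.
    revenue_score = revenue(price) / max_revenue
    # Distance from the competitor average, clamped to 0-1.
    competitive_score = max(0.0, 1.0 - abs(price - competitor_avg) / competitor_avg)
    return 0.7 * revenue_score + 0.3 * competitive_score


revenue_only_price = max(candidate_prices, key=revenue)
best_price = max(candidate_prices, key=pricing_utility)
```

With these numbers, revenue alone peaks at 15.00 and matching competitors would mean 20.00. The weighted utility settles on 17.50, which is the kind of compromise a utility-based agent is designed to find.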

More Utility-Based Agent Examples

Portfolio Management Systems

Financial agents optimize investment portfolios by balancing expected returns, risk exposure, liquidity needs, and diversification requirements. The utility function encodes investor preferences and constraints.

Resource Allocation in Cloud Computing

Cloud orchestration systems allocate computing resources by balancing performance requirements, cost constraints, energy efficiency, and service level agreements. Utility functions help make optimal allocation decisions across thousands of servers.

Healthcare Treatment Planning

Medical decision support systems evaluate treatment options by considering efficacy, side effects, cost, patient preferences, and long-term outcomes. Utility-based reasoning helps clinicians make informed trade-offs.

Smart Grid Energy Management

Energy distribution systems optimize power allocation by balancing demand, supply availability, cost, environmental impact, and grid stability. Utility functions enable real-time optimization across these competing factors.

Challenges in Utility-Based Design

Utility Function Specification: Defining accurate utility functions requires deep domain knowledge. Poorly specified utilities lead to technically optimal but practically undesirable behaviour.

Weight Calibration: Determining appropriate weights for different objectives is often more art than science. Small changes can dramatically affect behaviour.

Computational Cost: Evaluating utility across many possible actions and outcomes can be computationally expensive, especially in continuous or high-dimensional spaces.

Changing Priorities: Business priorities shift over time. Utility functions must be updated to reflect new objectives, requiring ongoing maintenance.

Learning Agents: Adaptive Intelligence

Learning agents represent the pinnacle of agent sophistication: systems that improve their performance through experience without explicit reprogramming.

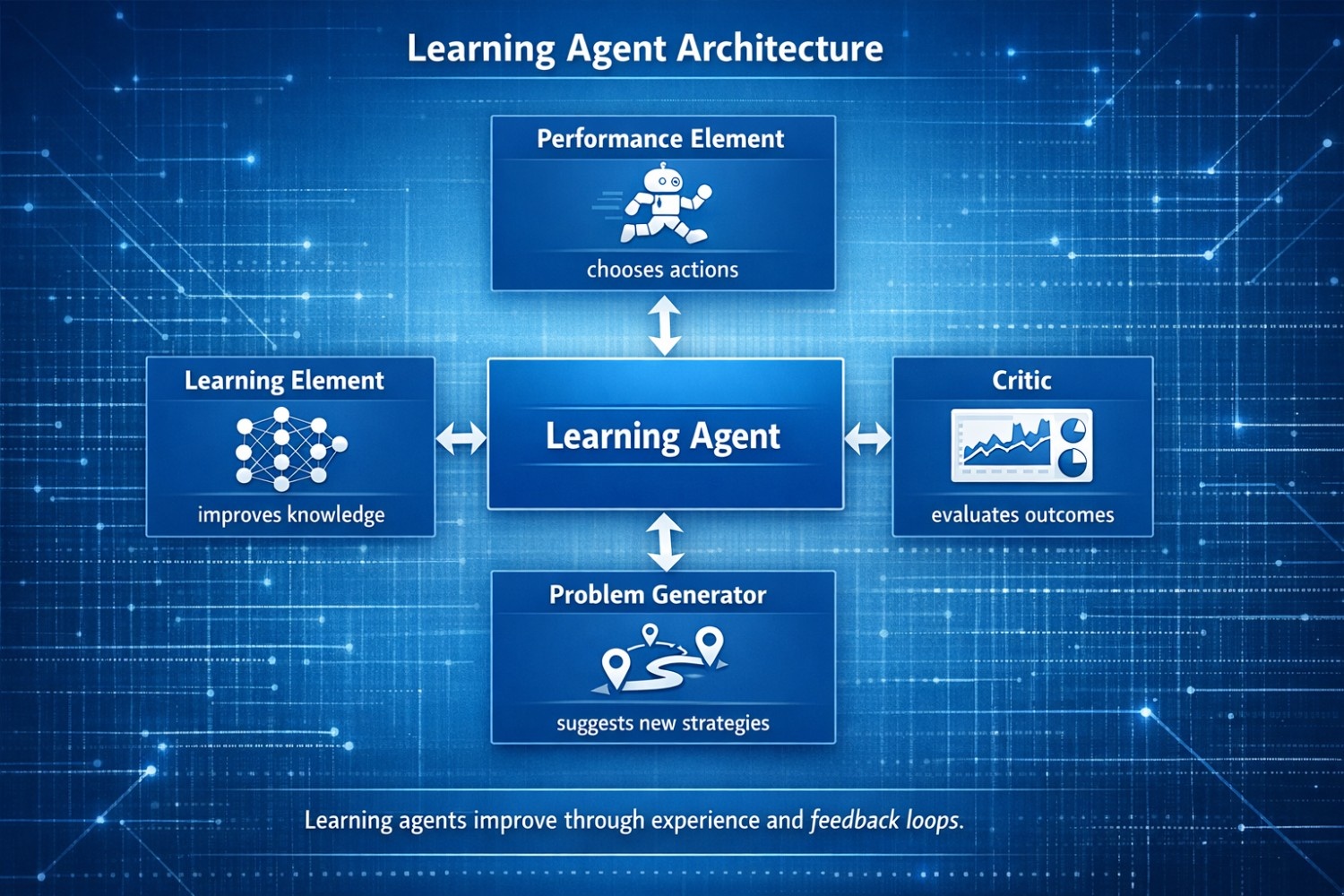

Learning Agent Architecture

The learning agent architecture consists of four interconnected components that work together to enable continuous improvement:

- Performance Element (The Actor)

This component selects actions based on current knowledge. It’s the “doing” part of the agent: the module that actually interacts with the environment and executes decisions.

In a customer service agent, the performance element decides which response to generate, which knowledge base article to retrieve, or when to escalate to a human.

- Learning Element (The Improver)

This component analyzes feedback and updates the agent’s knowledge to improve future performance. It implements the actual learning algorithms: supervised learning, reinforcement learning, or unsupervised pattern discovery.

The learning element might update response templates based on customer satisfaction scores, refine classification models based on labelled examples, or adjust policies based on reward signals.

- Critic (The Evaluator)

The critic provides feedback on the agent’s performance by comparing actual outcomes against expected or desired outcomes. It generates the learning signal that drives improvement.

In a recommendation system, the critic might measure click-through rates, conversion rates, and user engagement to evaluate recommendation quality.

- Problem Generator (The Explorer)

This component suggests exploratory actions that help the agent discover new strategies and avoid getting stuck in local optima. It balances exploitation (using known good strategies) with exploration (trying new approaches).

An advertising agent might occasionally show unconventional ad placements to discover whether they perform better than current strategies.

How Learning Agents Improve Over Time

Learning agents follow a continuous improvement cycle:

1. PERFORM → Execute action based on current knowledge

2. OBSERVE → Monitor outcome and environmental response

3. EVALUATE → Critic assesses performance quality

4. LEARN → Update knowledge based on feedback

5. EXPLORE → Problem generator suggests new strategies

6. REPEAT → Cycle continues indefinitely

This cycle enables agents to adapt to changing conditions, discover better strategies, and handle situations they weren’t explicitly programmed for.
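The cycle can be illustrated with a very small epsilon-greedy agent choosing among three actions. This is a minimal sketch: the class name `BanditLearningAgent`, the fixed payoffs, and the seed are assumptions for the example, and each of the four components is reduced to a line or two.

```python
import random


class BanditLearningAgent:
    """Epsilon-greedy agent over a fixed set of actions (deliberately tiny)."""

    def __init__(self, n_actions, exploration_rate=0.2, seed=0):
        self.values = [0.0] * n_actions  # knowledge used by the performance element
        self.counts = [0] * n_actions
        self.exploration_rate = exploration_rate
        self.rng = random.Random(seed)   # seeded so runs are repeatable

    def select_action(self):
        # Problem generator: occasionally try a random action (EXPLORE).
        if self.rng.random() < self.exploration_rate:
            return self.rng.randrange(len(self.values))
        # Performance element: otherwise use the best-known action (PERFORM).
        return max(range(len(self.values)), key=lambda a: self.values[a])

    def learn(self, action, reward):
        # Critic: gap between observed reward and current estimate (EVALUATE).
        error = reward - self.values[action]
        # Learning element: incremental average update (LEARN).
        self.counts[action] += 1
        self.values[action] += error / self.counts[action]


payoffs = [0.2, 0.5, 0.8]  # hidden from the agent; a made-up environment
agent = BanditLearningAgent(n_actions=len(payoffs))
for _ in range(500):                 # REPEAT
    action = agent.select_action()
    reward = payoffs[action]         # OBSERVE
    agent.learn(action, reward)

best_known = max(range(len(payoffs)), key=lambda a: agent.values[a])
```

Without the exploration branch the agent would lock onto the first action it tried, since that action's estimate becomes positive while the others stay at zero. Occasional exploration is what lets it discover the better-paying action.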

Types of Learning in Intelligent Agents

Supervised Learning

The agent learns from labelled examples where correct outputs are provided. A spam filter learns from emails labelled as “spam” or “not spam,” gradually improving its classification accuracy.

Use cases: Classification, regression, pattern recognition

Reinforcement Learning

The agent learns through trial and error, receiving rewards or penalties based on action outcomes. This is particularly powerful for sequential decision-making where the consequences of actions unfold over time.

Use cases: Game playing, robotic control, autonomous navigation, resource optimization
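One common way to handle consequences that unfold over time is the tabular Q-learning update, sketched below on a hypothetical two-step corridor (`s0` to `s1` to a goal). The state names, learning rate, and discount factor are illustrative assumptions.

```python
def q_update(q, state, action, reward, next_state, alpha=0.5, gamma=0.9):
    """Apply one Q-learning update in place and return the new value."""
    # Terminal states are absent from q, so their future value is 0.
    best_next = max(q[next_state].values()) if next_state in q else 0.0
    target = reward + gamma * best_next
    q[state][action] += alpha * (target - q[state][action])
    return q[state][action]


q = {"s0": {"left": 0.0, "right": 0.0}, "s1": {"left": 0.0, "right": 0.0}}

# The same two-step episode replayed twice: only the last step pays a reward.
episode = [("s0", "right", 0.0, "s1"), ("s1", "right", 1.0, "goal")]
for _ in range(2):
    for state, action, reward, next_state in episode:
        q_update(q, state, action, reward, next_state)
```

After the first pass only `s1` has learned anything. On the second pass that value flows back to `s0`, even though moving right from `s0` never produced a reward itself.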

Unsupervised Learning

The agent discovers hidden patterns and structures in data without explicit labels. Customer segmentation agents identify natural groupings in user behaviour without being told what segments should exist.

Use cases: Clustering, anomaly detection, dimensionality reduction

Transfer Learning

The agent applies knowledge learned in one domain to related domains, accelerating learning in new situations. A vision system trained on general images can be fine-tuned for medical imaging with less data.

Use cases: Domain adaptation, few-shot learning, cross-domain applications

Real-World Learning Agent Examples

Recommendation Engines

Netflix, Spotify, and Amazon use learning agents that continuously improve recommendations based on user interactions. They learn which content appeals to which users, adapting to changing preferences over time.

The learning element updates recommendation models based on what users watch, skip, or rate. The critic evaluates recommendation quality through engagement metrics. The problem generator occasionally suggests unexpected content to discover new preferences.

Fraud Detection Systems

Financial institutions deploy learning agents that adapt to evolving fraud patterns. As criminals develop new tactics, the agent learns to recognize them without explicit programming.

These systems use supervised learning on labeled fraud cases, unsupervised learning to detect anomalies, and reinforcement learning to optimize investigation resource allocation.

Predictive Maintenance

Manufacturing facilities use learning agents that monitor equipment and predict failures before they occur. The agents learn normal operating patterns and detect deviations that signal impending problems.

Over time, these agents improve their predictions by learning which sensor patterns actually precede failures versus false alarms.

Personalized Medicine

Healthcare systems deploy learning agents that recommend treatments based on patient characteristics, learning from outcomes across thousands of cases. They discover which treatments work best for which patient profiles.

Building Intelligent Agents: Practical Implementation

Now let’s move from theory to practice. Here’s how to implement these agent architectures in production systems.

Step 1: Define Your Agent’s Purpose and Scope

The most common mistake in agent implementation in AI is starting with technology rather than problems. Before writing code, answer these questions:

What specific problem are you solving? “Improve customer service” is too vague. “Automatically route support tickets to the right department with 90% accuracy” is specific.

Who are your users? Understanding user workflows, pain points, and expectations shapes agent design.

What’s the deliverable? Define concrete outputs: a decision, a recommendation, a completed task, or a report.

What are the boundaries? Explicitly state what the agent will NOT do. Clear limitations prevent scope creep and set appropriate user expectations.

Step 2: Choose the Right Agent Architecture

Use this decision framework:

Choose Goal-Based Agents When:

- Success is binary (goal achieved or not)

- The path to the goal can vary

- You need planning and multi-step reasoning

- Trade-offs between competing objectives aren’t critical

- Example: Route planning, task scheduling, workflow automation

Choose Utility-Based Agents When:

- Multiple competing objectives must be balanced

- Outcomes have varying degrees of quality

- Trade-offs must be explicit and tunable

- Optimization matters more than binary success

- Example: Resource allocation, pricing systems, portfolio management

Choose Learning Agents When:

- Patterns are too complex to program explicitly

- The environment changes over time

- You have access to feedback data

- Adaptability is more valuable than predictability

- Example: Recommendation systems, fraud detection, predictive maintenance

Combine Multiple Approaches When:

- Complex problems require different capabilities

- Safety constraints need reflex behaviour

- Strategic planning needs goal-based reasoning

- Optimization needs utility functions

- Adaptation needs learning

- Example: Autonomous vehicles, enterprise automation platforms

Step 3: Design Your Agent’s Core Components

For Goal-Based Agents:

    class GoalBasedAgent:
        def __init__(self, goal_state, search_algorithm):
            self.goal_state = goal_state
            # Planner callable (A* or similar) with the signature
            # search_algorithm(start, goal, environment) -> list of actions
            self.search_algorithm = search_algorithm
            self.environment = None
            self.current_state = None
            self.plan = []

        def perceive(self, environment):
            """Update current state from environment"""
            self.environment = environment
            self.current_state = environment.get_state()

        def is_goal_achieved(self):
            """Check if goal state is reached"""
            return self.current_state == self.goal_state

        def plan_actions(self):
            """Generate action sequence to reach goal"""
            self.plan = self.search_algorithm(
                start=self.current_state,
                goal=self.goal_state,
                environment=self.environment,
            ) or []

        def execute_next_action(self):
            """Return the next step in the plan, planning first if needed"""
            if not self.plan:
                self.plan_actions()
            if self.plan:
                return self.plan.pop(0)
            return None  # No valid plan found

        def act(self, environment):
            """Main agent loop"""
            self.perceive(environment)
            if self.is_goal_achieved():
                return "GOAL_ACHIEVED"
            action = self.execute_next_action()
            if action is not None:
                environment.execute(action)
                return action
            return "PLANNING_FAILED"

For Utility-Based Agents:

    class UtilityBasedAgent:
        def __init__(self, utility_function, action_space, predict_outcome):
            self.utility_function = utility_function
            self.action_space = action_space
            # Transition model with the signature
            # predict_outcome(state, action) -> predicted state
            self.predict_outcome = predict_outcome
            self.current_state = None

        def perceive(self, environment):
            """Gather current state information"""
            self.current_state = environment.get_state()

        def evaluate_action(self, action):
            """Calculate expected utility for an action"""
            # Predict resulting state, then score it
            predicted_state = self.predict_outcome(self.current_state, action)
            return self.utility_function(predicted_state)

        def select_best_action(self):
            """Choose action with highest expected utility"""
            best_action = None
            best_utility = float('-inf')
            for action in self.action_space:
                utility = self.evaluate_action(action)
                if utility > best_utility:
                    best_utility = utility
                    best_action = action
            return best_action, best_utility

        def act(self, environment):
            """Main agent loop"""
            self.perceive(environment)
            action, utility = self.select_best_action()
            if action is not None:
                environment.execute(action)
                return {
                    'action': action,
                    'expected_utility': utility
                }
            return None

For Learning Agents:

    import random


    class LearningAgent:
        def __init__(self, performance_element, learning_element, critic,
                     problem_generator, learning_rate=0.1, exploration_rate=0.2):
            self.learning_rate = learning_rate
            self.exploration_rate = exploration_rate
            # Four key components, supplied by the caller
            self.performance_element = performance_element
            self.learning_element = learning_element
            self.critic = critic
            self.problem_generator = problem_generator
            self.experience_buffer = []

        def perceive(self, environment):
            """Gather environmental information"""
            return environment.get_state()

        def select_action(self, state):
            """Choose between exploration and exploitation"""
            if random.random() < self.exploration_rate:
                # Explore: try suggested exploratory action
                return self.problem_generator.suggest_action(state)
            # Exploit: use current best knowledge
            return self.performance_element.select_action(state)

        def execute_and_learn(self, environment):
            """Main learning loop"""
            # Perceive current state
            state = self.perceive(environment)
            # Select and execute action
            action = self.select_action(state)
            environment.execute(action)
            # Observe outcome
            next_state = self.perceive(environment)
            reward = environment.get_reward()
            # Critic evaluates performance
            feedback = self.critic.evaluate(state, action, next_state, reward)
            # Store experience
            self.experience_buffer.append({
                'state': state,
                'action': action,
                'next_state': next_state,
                'reward': reward,
                'feedback': feedback
            })
            # Learning element updates knowledge in batches of 32
            if len(self.experience_buffer) >= 32:
                self.learning_element.update(
                    self.experience_buffer,
                    self.performance_element
                )
                self.experience_buffer = []
            return action, reward

Step 4: Implement Robust Evaluation and Monitoring

Production agents require continuous evaluation. Implement these monitoring systems:

Performance Metrics:

- Task completion rate

- Average time to completion

- Resource utilization

- Error rates and failure modes

- User satisfaction scores

Learning Metrics (for learning agents):

- Learning curve progression

- Convergence stability

- Exploration vs exploitation balance

- Model drift detection

Business Metrics:

- ROI and cost savings

- Revenue impact

- Customer satisfaction

- Operational efficiency gains
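As a starting point for the performance metrics listed above, the sketch below tracks completion rate, average time to completion, and failure modes in memory. The class name `AgentMetrics` and its fields are hypothetical; a real deployment would more likely send these numbers to an existing monitoring system.

```python
from dataclasses import dataclass, field
from statistics import mean


@dataclass
class AgentMetrics:
    """In-memory counters for a single agent (illustrative only)."""
    durations: list = field(default_factory=list)  # seconds per completed task
    completed: int = 0
    failed: int = 0
    failure_modes: dict = field(default_factory=dict)

    def record(self, success, duration_s, failure_mode=None):
        if success:
            self.completed += 1
            self.durations.append(duration_s)
        else:
            self.failed += 1
            key = failure_mode or "unknown"
            self.failure_modes[key] = self.failure_modes.get(key, 0) + 1

    def summary(self):
        total = self.completed + self.failed
        return {
            "task_completion_rate": self.completed / total if total else 0.0,
            "avg_time_to_completion_s": mean(self.durations) if self.durations else None,
            "error_rate": self.failed / total if total else 0.0,
            "failure_modes": dict(self.failure_modes),
        }


metrics = AgentMetrics()
metrics.record(True, 2.0)
metrics.record(True, 4.0)
metrics.record(False, 1.0, failure_mode="PLANNING_FAILED")
metrics.record(True, 3.0)
report = metrics.summary()
```

Recording the failure mode alongside the count matters in practice: an error rate tells you something is wrong, while the breakdown tells you where to look.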

Step 5: Build in Safety and Control Mechanisms

Advanced agents need guardrails:

Human-in-the-Loop: For high-stakes decisions, require human approval before execution.

Confidence Thresholds: Only execute actions when confidence exceeds defined thresholds.

Rollback Capabilities: Implement undo mechanisms for reversible actions.

Anomaly Detection: Monitor for unusual behaviour patterns that might indicate problems.

Rate Limiting: Prevent agents from taking too many actions too quickly.
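Three of these guardrails (confidence thresholds, human approval, and rate limiting) can be combined in one pre-execution check. The following is a minimal sketch: the `Guardrails` class, its default thresholds, and the reason strings are assumptions for illustration, not a standard API.

```python
import time
from collections import deque


class Guardrails:
    """Decide whether an agent action may run (illustrative defaults)."""

    def __init__(self, min_confidence=0.8, max_actions=5, window_s=60.0,
                 clock=time.monotonic):
        self.min_confidence = min_confidence
        self.max_actions = max_actions  # allowed actions per time window
        self.window_s = window_s
        self.clock = clock              # injectable so tests can control time
        self.recent = deque()           # timestamps of allowed actions

    def check(self, confidence, high_stakes=False, approved=False):
        """Return (allowed, reason) for a proposed action."""
        now = self.clock()
        # Forget actions that have fallen out of the rate-limit window.
        while self.recent and now - self.recent[0] > self.window_s:
            self.recent.popleft()
        if confidence < self.min_confidence:
            return False, "low_confidence"
        if high_stakes and not approved:
            return False, "needs_human_approval"
        if len(self.recent) >= self.max_actions:
            return False, "rate_limited"
        self.recent.append(now)
        return True, "ok"


guard = Guardrails(min_confidence=0.8, max_actions=2)
decision = guard.check(confidence=0.65)
```

Returning a reason with each refusal gives the audit log and the escalation path something concrete to work with. Only allowed actions count toward the rate limit, so rejected proposals do not use up the budget.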

Advanced Implementation Patterns

Hybrid Agent Architecture

The most powerful production systems combine multiple agent types:

    class HybridIntelligentAgent:
        def __init__(self, safety_rules, planner, optimizer, learner):
            # Each layer is passed in ready-made. The methods called in act()
            # are the interface this sketch expects from each layer.
            self.safety_rules = safety_rules  # Safety layer: reflex rules
            self.planner = planner            # Strategic layer: goal-based planning
            self.optimizer = optimizer        # Optimization layer: utility-based decisions
            self.learner = learner            # Adaptation layer: learning from experience

        def act(self, environment):
            # First: check safety constraints
            if not self.safety_rules.is_safe(environment):
                return self.safety_rules.safe_action(environment)

            # Second: generate strategic plan
            plan = self.planner.generate_plan(environment)

            # Third: optimize action selection within plan
            action = self.optimizer.select_best_action(
                plan.get_valid_actions()
            )

            # Fourth: execute, then learn from outcome
            outcome = environment.execute(action)
            self.learner.update(action, outcome)
            return action

This architecture provides:

- Safety through reflex rules

- Strategic thinking through goal-based planning

- Optimization through utility functions

- Adaptation through learning

Multi-Agent Coordination

Complex problems often require multiple specialized agents working together:

Hierarchical Coordination: A manager agent delegates subtasks to specialist agents, coordinating their activities toward a common goal.

Market-Based Coordination: Agents bid for tasks based on their capabilities and current workload, with a coordinator allocating work to maximize overall utility.

Blackboard Systems: Agents share information through a common knowledge base, with each agent contributing its specialized expertise.
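Market-based coordination is the easiest of the three to show in a few lines. The sketch below runs a simple greedy auction: the bid formula (proficiency divided by one plus current load), the agent names, and the task list are all assumptions for illustration.

```python
def allocate_tasks(tasks, agents):
    """Greedy auction: each task goes to the agent with the highest bid.

    tasks:  list of (task_name, required_skill)
    agents: dict of agent_name -> {"skills": {skill: proficiency}, "capacity": int}
    """
    load = {name: 0 for name in agents}
    assignment = {}
    for task, skill in tasks:
        bids = {}
        for name, info in agents.items():
            if load[name] >= info["capacity"]:
                continue  # agent is full and does not bid
            proficiency = info["skills"].get(skill, 0.0)
            # The bid shrinks as the agent takes on more work.
            bids[name] = proficiency / (1 + load[name])
        if not bids or max(bids.values()) == 0.0:
            assignment[task] = None  # nobody can take this task
            continue
        winner = max(bids, key=bids.get)
        assignment[task] = winner
        load[winner] += 1
    return assignment


agents = {
    "billing_agent": {"skills": {"billing": 0.9, "general": 0.4}, "capacity": 2},
    "tech_agent": {"skills": {"network": 0.8, "general": 0.5}, "capacity": 2},
}
tasks = [
    ("refund request", "billing"),
    ("router offline", "network"),
    ("plan question", "general"),
    ("invoice dispute", "billing"),
]
assignment = allocate_tasks(tasks, agents)
```

In this run the two billing tasks go to `billing_agent` and the other two to `tech_agent`. A greedy pass like this is simple to reason about but does not guarantee the best overall allocation; a coordinator that needs that would solve an assignment problem instead.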

Common Pitfalls and How to Avoid Them

Pitfall 1: Overengineering Early Builds

Problem: Teams try to build fully autonomous, multi-modal agents with perfect memory on day one, spending months perfecting features that don’t align with business priorities.

Solution: Start with a minimal viable agent. Implement core functionality first, then add sophistication based on real-world feedback. A simple goal-based agent that works is better than a complex learning agent that doesn’t.

Pitfall 2: Unclear Business Objectives

Problem: AI agent initiatives start with technology rather than business problems, making ROI hard to quantify and stakeholder buy-in challenging.

Solution: Define specific, measurable business outcomes before implementation. “Reduce support ticket resolution time by 30%” is actionable. “Improve customer service” is not.

Pitfall 3: Poor Data Foundations

Problem: Incomplete, inconsistent, or siloed data leads to poor agent performance. The agent is only as good as the data it receives.

Solution: Invest in data quality, validation, and enrichment before building agents. Implement robust data pipelines and knowledge retrieval architectures.

Pitfall 4: Ignoring Reasoning and Orchestration

Problem: Organizations mistake AI agents for advanced chatbots, building systems that only deliver surface-level responses without deeper intelligence.

Solution: Implement proper reasoning, planning, and orchestration layers. Real enterprise use cases demand integration of rules, tools, and structured decision flows.

Pitfall 5: Weak Governance and Oversight

Problem: Agents operate without proper monitoring, leading to unexpected behavior, security vulnerabilities, and compliance issues.

Solution: Build governance frameworks from the start. Implement logging, auditing, human oversight mechanisms, and clear escalation paths.

Tools and Frameworks for Building Intelligent Agents

Modern agent development leverages specialized frameworks:

LangChain: Highly modular framework for building LLM-powered agents. Ideal for composing complex chains of reasoning and retrieval, though it has a steep learning curve for beginners.

LangGraph: Extends LangChain with a directed-graph framework supporting state management and concurrent agent workflows. Well suited to orchestrating complex multi-agent interactions.

Microsoft AutoGen: Streamlined interface for creating chat-based, collaborative agents. Good for rapid prototyping and conversational agent development.

CrewAI: Framework specifically designed for multi-agent collaboration, enabling teams of specialized agents to work together on complex tasks.

Latenode: Visual workflow platform that simplifies modular agent design and state management, making it easier to build and maintain agent systems without deep technical expertise.

To better understand how these frameworks map to different agent architectures, check out our previous guide.

Real-World Success Stories

E-Commerce Dynamic Pricing

Challenge: A retail company needed to optimize pricing across 50,000 products in real-time, balancing revenue, customer satisfaction, and competitive positioning.

Solution: Implemented a utility-based agent with a carefully designed utility function weighing revenue (40%), customer satisfaction (25%), competitive positioning (20%), and inventory pressure (15%).

Results: 18% revenue increase, 12% improvement in customer satisfaction scores, and 25% reduction in overstock situations.

Manufacturing Predictive Maintenance

Challenge: A manufacturing facility experienced unexpected equipment failures causing costly downtime.

Solution: Deployed a learning agent that monitored sensor data, learned normal operating patterns, and predicted failures before they occurred.

Results: 60% reduction in unplanned downtime, 35% decrease in maintenance costs, and improved overall equipment effectiveness (OEE) from 72% to 89%.

Customer Service Automation

Challenge: A telecommunications company handled 100,000+ support tickets monthly, with 40% being routine inquiries that still required human agents.

Solution: Built a hybrid agent combining goal-based planning (for multi-step troubleshooting), utility-based optimization (for routing complex cases), and learning (for continuous improvement).

Results: 65% of routine tickets fully automated, 30% reduction in average resolution time, and 22% improvement in customer satisfaction scores.

The Future of Intelligent Agents

The agent landscape is evolving rapidly. Key trends shaping the future:

Agentic AI Market Growth: The market is projected to reach $52.62 billion by 2030 from $7.84 billion in 2025, growing at 46.3% CAGR.

Multi-Agent Ecosystems: Organizations are moving from single-purpose agents to coordinated systems of specialized agents that collaborate on complex workflows.

Improved Reasoning Capabilities: Advances in large language models are enabling more sophisticated planning, reasoning, and decision-making in agent systems.

Better Governance Tools: As agents become more autonomous, governance, observability, and control mechanisms are maturing to ensure safe, reliable operation.

Domain-Specific Agents: The shift from general-purpose to specialized agents grounded in domain knowledge and enterprise data is accelerating.

Conclusion

Understanding and implementing goal-based agents, utility-based optimization, and learning agent architectures is essential for building production-ready AI systems that deliver real business value.

Goal-based agents excel at planning and multi-step reasoning when success is binary and the path can vary. They’re perfect for navigation, scheduling, and workflow automation.

Utility-based agents optimize across competing objectives by making trade-offs explicit through utility functions. They’re ideal for resource allocation, pricing, and any scenario requiring balanced optimization.

Learning agents adapt and improve through experience, handling complex patterns and changing environments. They power recommendation systems, fraud detection, and predictive maintenance.

The most successful implementations combine these approaches, using reflex behaviour for safety, goal-based planning for strategy, utility functions for optimization, and learning for adaptation.

As you embark on building intelligent agents, remember: start with clear business objectives, choose the right architecture for your problem, implement robust evaluation and monitoring, and build in safety mechanisms from day one. The future belongs to organizations that can effectively deploy intelligent agents that reason, optimize, and learn.

The tools exist, the frameworks are maturing, and the competitive advantages are waiting. What intelligent agent will you build first?

Written by Pragya, who writes about AI tools, digital trends, and emerging technologies.