AI Can Create Anyone — But How Do We Prove Who’s Real?

Introduction

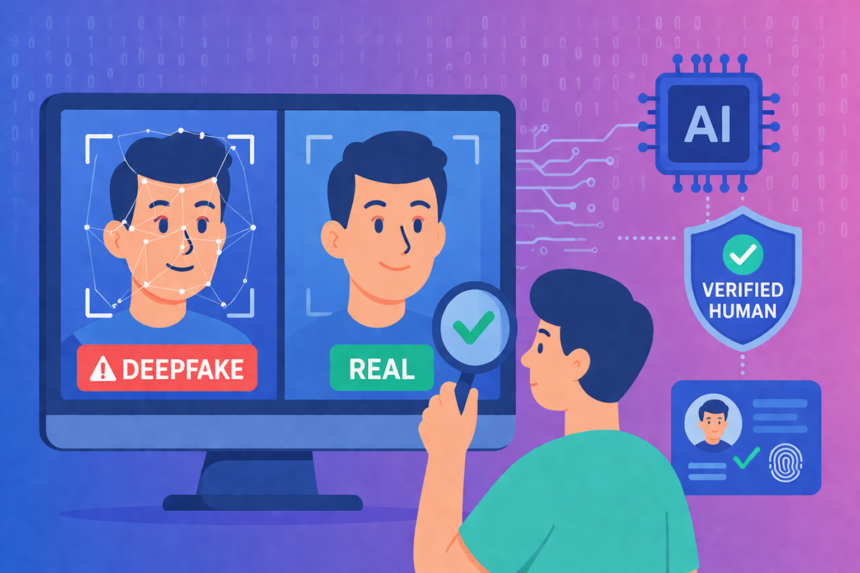

Artificial intelligence is becoming more powerful and more widely used in digital environments. It can now generate images, videos, voices, and even entire online identities that appear realistic.

While this brings many benefits, it also creates new challenges. One of the most important is determining whether a person or identity is real. As the volume of AI-generated content grows, the ability to prove authenticity matters more, both for individuals and for automated systems.

“Deepfakes are going to be indistinguishable from reality very soon.”

— Hany Farid (UC Berkeley professor, one of the leading experts in deepfake detection)

What is AI-Generated Content?

AI-generated content is digital media created by artificial intelligence systems. These systems are trained on large datasets and can produce realistic images, text, audio, and video.

In many cases, AI-generated content is useful. It is used in design, marketing, entertainment, and automation. However, the same technology can also be used to create misleading or false representations of people and events.

What are Deepfakes?

Deepfakes are a type of AI-generated content that imitates human likenesses, including faces, voices, and movements that closely resemble real individuals.

Deepfakes are created with deep learning models, which is where the name comes from. These models can replicate facial expressions, voice patterns, and mannerisms, making it difficult to distinguish real content from synthetic content.

As a result, deepfakes are increasingly used both in creative applications and in harmful activities such as impersonation and misinformation.

Why This Is a Problem

The rise of AI-generated identities introduces challenges for trust and verification. In digital environments, many systems rely on visual or audio input to make decisions. If that input is unreliable, the decisions built on it become unreliable too.

For example, an online platform may receive an image or video that appears legitimate but is actually generated by AI. Without proper verification, the system cannot determine whether the source is real.

This affects not only individuals but also automated workflows, content moderation systems, and data processing pipelines.

What is Deepfake Detection?

Deepfake detection is the process of analysing content to determine whether it has been generated or manipulated using artificial intelligence.

Detection systems use machine learning techniques to identify patterns, inconsistencies, and anomalies in digital media. These systems are designed to work at scale and can process large amounts of content quickly.

Deepfake detection plays an important role in identifying suspicious content and reducing the spread of manipulated media.

Limitations of Detection Systems

Although detection systems are useful, they are not perfect. As generative models improve, synthetic content becomes harder to identify accurately.

Detection systems may produce false positives, where real content is flagged as fake, or false negatives, where fake content is not detected. This can create uncertainty in automated systems.
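To make the two error types concrete, here is a minimal sketch of how they could be measured. It assumes a detector that outputs a score between 0 and 1 (higher means "more likely fake") and a small set of items whose true status is known. The function name, the scores, and the thresholds are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class ErrorRates:
    false_positive_rate: float  # share of real items wrongly flagged as fake
    false_negative_rate: float  # share of fake items that were not flagged


def error_rates(scores, labels, threshold):
    """Compute error rates for a score-based detector.

    scores: detector outputs in [0, 1] (assumed: higher = more likely fake)
    labels: True if the item is actually fake, False if it is real
    threshold: items scoring at or above this value are flagged as fake
    """
    fp = fn = real = fake = 0
    for score, is_fake in zip(scores, labels):
        flagged = score >= threshold
        if is_fake:
            fake += 1
            if not flagged:
                fn += 1  # fake content slipped through
        else:
            real += 1
            if flagged:
                fp += 1  # real content was flagged
    return ErrorRates(
        false_positive_rate=fp / real if real else 0.0,
        false_negative_rate=fn / fake if fake else 0.0,
    )


# Hypothetical detector scores for three fake and three real items.
scores = [0.95, 0.80, 0.40, 0.10, 0.70, 0.20]
labels = [True, True, True, False, False, False]

strict = error_rates(scores, labels, threshold=0.9)
lenient = error_rates(scores, labels, threshold=0.5)
```

In this toy data, the strict threshold flags no real content but misses two of the three fakes, while the lenient threshold catches more fakes at the cost of flagging one real item. Moving the threshold trades one kind of error for the other, which is why neither can simply be tuned away.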

Another limitation is that detection focuses only on the content itself. It does not provide information about the identity behind the content. Even if content appears real, there is no confirmation that it came from a verified human source.

What is Human Verification?

Human verification is a method used to confirm that a real person is present during a specific interaction or event. Instead of analysing content, it focuses on verifying identity.

This is often done using biometric technologies such as facial recognition and liveness detection. These methods are designed to confirm that the input is coming from a real, live human rather than a static image or generated media.

Human verification provides a different type of assurance compared to detection systems.

How Human Verification Works

Human verification systems typically involve a real-time interaction. A user may be asked to complete a short verification process, such as a facial scan or liveness check.

During this process, the system confirms that the individual is physically present and not using pre-generated or manipulated content. Once verified, a record of that verification can be created.

Platforms such as PRVEN use this approach to generate a timestamped verification record linked to a real human presence, without storing sensitive biometric data.
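As a rough illustration of what a timestamped verification record might look like, the sketch below stores only the outcome of a liveness check, a session identifier, and a UTC timestamp, then signs the record so later tampering can be noticed. This is not PRVEN's API or data format. The field names, the `SECRET_KEY`, and both functions are assumptions made for this example, and the liveness check itself is outside its scope.

```python
import hashlib
import hmac
import json
import secrets
from datetime import datetime, timezone

# Hypothetical server-side signing key; a real system would load this
# from secure storage rather than generate it at import time.
SECRET_KEY = secrets.token_bytes(32)


def create_verification_record(session_id: str, liveness_passed: bool) -> dict:
    """Build a signed record of a verification event.

    Only the result and the time are kept; no image, video, or
    biometric template is stored in the record.
    """
    record = {
        "session_id": session_id,
        "liveness_passed": liveness_passed,
        "verified_at": datetime.now(timezone.utc).isoformat(),
    }
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    record["signature"] = hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest()
    return record


def is_record_authentic(record: dict) -> bool:
    """Recompute the signature and compare it in constant time."""
    unsigned = {k: v for k, v in record.items() if k != "signature"}
    payload = json.dumps(unsigned, sort_keys=True).encode("utf-8")
    expected = hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, record.get("signature", ""))


record = create_verification_record("session-123", liveness_passed=True)
tampered = dict(record, liveness_passed=False)
```

Here `is_record_authentic(record)` succeeds, while the `tampered` copy fails the check because its contents no longer match the signature. The design choice worth noting is that the record proves a check happened at a given time without holding any of the biometric input that was checked.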

Why Both Are Needed

Deepfake detection and human verification serve different purposes. Detection analyses content, while verification confirms identity.

If only detection is used, systems may still process content from unverified or impersonated sources. If only verification is used, systems may still need to analyse content for manipulation.

By combining both approaches, a more complete solution can be achieved. Detection can identify suspicious content, while verification can confirm whether a real person is involved.

This combination helps improve trust, reduce uncertainty, and support more reliable decision-making in AI systems and digital platforms.
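One way to picture the two signals working together is a simple decision rule. The sketch below assumes a detection score between 0 and 1 (higher means "more likely manipulated") and a yes/no flag for whether a human verification record exists. The thresholds, the `Decision` outcomes, and the `decide` function are hypothetical and would need tuning for any real platform.

```python
from enum import Enum


class Decision(Enum):
    ACCEPT = "accept"
    REVIEW = "review"
    REJECT = "reject"


def decide(
    fake_score: float,
    human_verified: bool,
    flag_threshold: float = 0.5,    # assumed value, for illustration only
    reject_threshold: float = 0.9,  # assumed value, for illustration only
) -> Decision:
    """Combine a content detection score with a human verification flag."""
    # Content that looks strongly manipulated is rejected even when the
    # sender is verified: a real person can still submit fake media.
    if fake_score >= reject_threshold:
        return Decision.REJECT
    # Suspicious content, or clean-looking content from an unverified
    # source, is sent for closer review instead of being trusted.
    if fake_score >= flag_threshold or not human_verified:
        return Decision.REVIEW
    # Low detection score and a verified human: both signals agree.
    return Decision.ACCEPT
```

For example, `decide(0.1, True)` accepts, `decide(0.1, False)` asks for review because no one has been verified, and `decide(0.95, True)` rejects despite verification. Each signal covers a gap the other leaves open.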

Conclusion

Artificial Intelligence continues to evolve, and with it, the ability to generate realistic digital identities. This creates new challenges for trust and authenticity.

Deepfake detection remains an important tool, but it does not address every aspect of the problem. Human verification introduces an additional layer that focuses on confirming real human presence.

By using both methods together, systems can better manage the risks associated with AI-generated content and create a more reliable digital environment.

Sandeep Kumar is the Founder & CEO of Aitude, a leading AI tools, research, and tutorial platform dedicated to empowering learners, researchers, and innovators. Under his leadership, Aitude has become a go-to resource for those seeking the latest in artificial intelligence, machine learning, computer vision, and development strategies.