Cybersecurity teams face an impossible challenge: analyzing millions of security events daily, identifying genuine threats among countless false positives, and responding faster than attackers can exploit vulnerabilities. Human analysts simply can’t keep pace with the volume and velocity of modern cyber threats.

- What Are Intelligent Agents in Cybersecurity?

- The Architecture of AI Security Automation Agents

- 1. Perception and Data Collection Layer

- 2. Analysis and Threat Detection Engine

- 3. Decision-Making and Risk Assessment Module

- 4. Response and Remediation Layer

- 5. Learning and Adaptation System

- Autonomous Threat Detection AI: Real-World Applications

- 1. Intelligent Intrusion Detection Systems (IDS)

- 2. Endpoint Detection and Response (EDR)

- 3. User and Entity Behaviour Analytics (UEBA)

- 4. Security Orchestration, Automation, and Response (SOAR)

- AI Agents vs Traditional Security Tools: Key Advantages

- 1. Speed and Scale

- 2. Consistency and Reliability

- 3. Handling Complexity

- 4. Adaptive Learning

- 5. Reducing Alert Fatigue

- Types of Security Agents and Their Use Cases

- 1. Reactive Security Agents

- 2. Model-Based Security Agents

- 3. Goal-Based Security Agents

- 4. Utility-Based Security Agents

- 5. Learning Security Agents

- Challenges and Considerations for Implementing Security Agents

- 1. Adversarial Machine Learning

- 2. False Positives and Alert Fatigue

- 3. Explainability and Trust

- 4. Data Privacy and Compliance

- 5. Integration Complexity

- The Future of Intelligent Agents in Cybersecurity

- 1. Autonomous Security Operations Centers (SOCs)

- 2. Predictive Threat Intelligence

- 3. Collaborative Multi-Agent Systems

- 4. Quantum-Resistant Security Agents

- 5. Human-Agent Teaming

- Practical Implementation: Building Security Agents

- Step 1: Define Security Objectives

- Step 2: Assess Your Data Foundation

- Step 3: Choose the Right Agent Architecture

- Step 4: Start with High-Value Use Cases

- Step 5: Implement Human-in-the-Loop Initially

- Step 6: Measure and Iterate

- Conclusion

Enter intelligent agents in cybersecurity: autonomous AI systems that continuously monitor networks, detect anomalies, predict attacks, and respond to threats in real time. In 2026, these AI-driven security agents aren’t just tools; they’re becoming essential teammates in the fight against increasingly sophisticated cyber adversaries.

Unlike traditional signature-based security tools that only catch known threats, AI agents in security leverage machine learning, behavioural analysis, and autonomous decision-making to identify zero-day exploits, insider threats, and advanced persistent threats (APTs) that would otherwise slip through defences undetected.

Whether you’re building security infrastructure, implementing threat detection systems, or architecting defence-in-depth strategies, understanding how intelligent agents transform cybersecurity operations is critical. This guide explores the architecture, capabilities, and real-world applications of AI security automation agents, with actionable insights for developers and security professionals.

What Are Intelligent Agents in Cybersecurity?

An intelligent agent in cybersecurity is an autonomous software system that perceives security-relevant events in its environment (networks, endpoints, applications), analyzes those events using AI models, makes decisions about potential threats, and takes action to protect systems, all with minimal human intervention.

These agents operate on the same fundamental principles as intelligent agents in other domains, but with specialized capabilities for security contexts:

- Continuous monitoring: 24/7 surveillance of network traffic, user behaviour, system logs, and application activity

- Anomaly detection: Identifying deviations from normal patterns that may indicate threats

- Threat intelligence integration: Correlating local observations with global threat data

- Autonomous response: Taking immediate action to contain threats before damage occurs

- Adaptive learning: Improving detection accuracy by learning from new attack patterns

The key difference between traditional security tools and intelligent agents is autonomy. While conventional systems alert human analysts to investigate, intelligent agents can independently assess situations, prioritize threats, and execute defensive actions, dramatically reducing response times from hours to milliseconds.

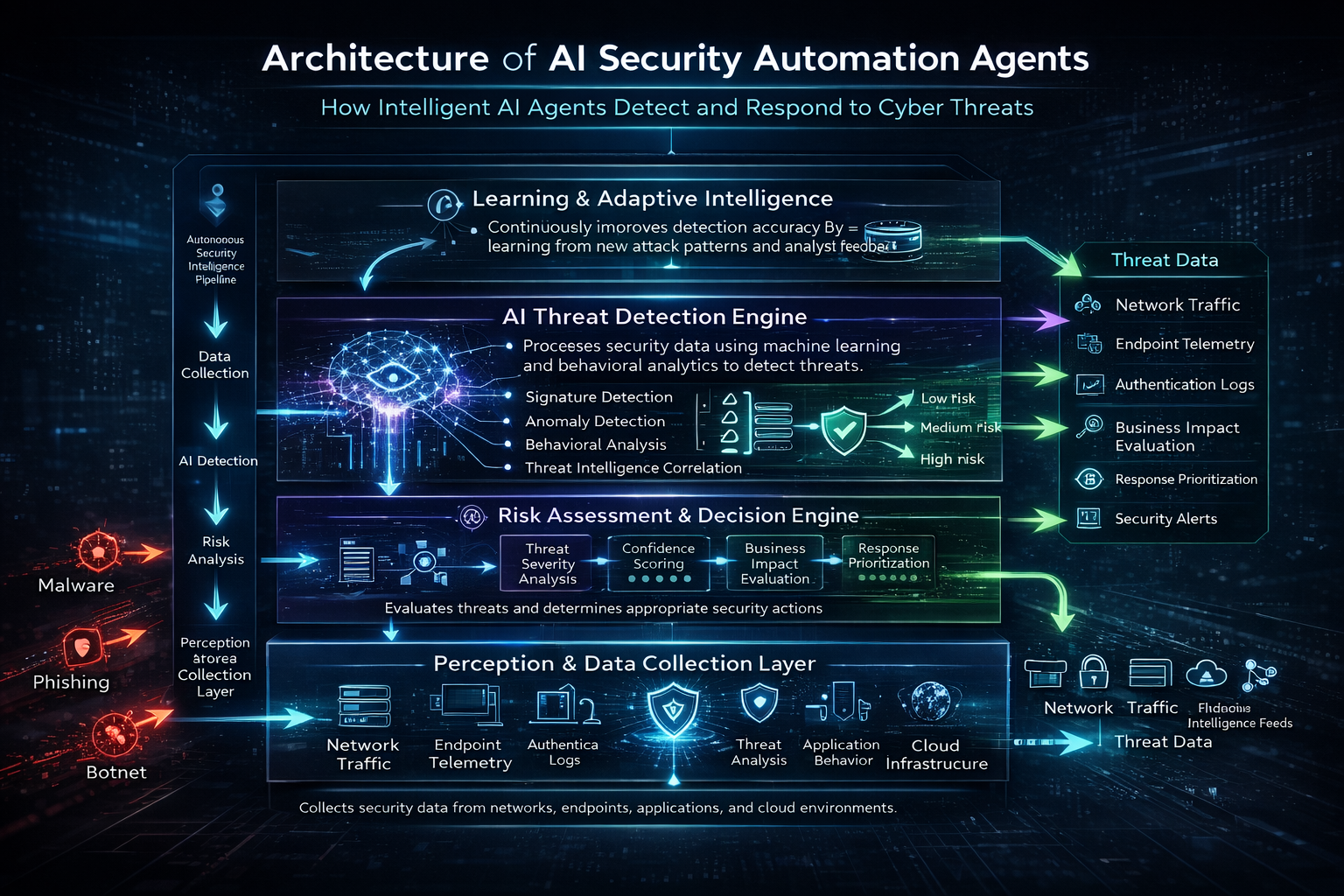

The Architecture of AI Security Automation Agents

Understanding AI security automation agent architecture is essential for developers building or integrating these systems. Modern security agents consist of several interconnected components that work together to provide comprehensive threat protection.

1. Perception and Data Collection Layer

The perception layer serves as the agent’s sensory system, gathering security-relevant data from across the IT environment:

Data sources include:

- Network traffic: Packet captures, flow data, DNS queries, SSL/TLS handshakes

- Endpoint telemetry: Process execution, file modifications, registry changes, memory access patterns

- Authentication logs: Login attempts, privilege escalations, access control events

- Application behaviour: API calls, database queries, user interactions

- Cloud infrastructure: Container activity, serverless function invocations, IAM changes

- Threat intelligence feeds: Known malicious IPs, domains, file hashes, and attack signatures

The challenge isn’t collecting data (modern enterprises generate terabytes daily) but rather filtering signal from noise. Intelligent agents use pre-processing algorithms to focus on security-relevant events while discarding benign activity.
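As a minimal sketch of that pre-processing step, the snippet below drops events that match an allowlist of routine activity and forwards everything else. The event fields (`source`, `action`) and the allowlist entries are hypothetical, chosen only for illustration; a production pipeline would typically normalize and enrich events before filtering.

```python
from typing import Iterable, Iterator

# Hypothetical allowlist of (source, action) pairs treated as routine noise.
BENIGN_PATTERNS = {
    ("backup-service", "file_read"),
    ("monitoring", "heartbeat"),
}


def security_relevant(events: Iterable[dict]) -> Iterator[dict]:
    """Yield only the events that do not match a known-benign pattern."""
    for event in events:
        key = (event.get("source"), event.get("action"))
        if key not in BENIGN_PATTERNS:
            yield event


events = [
    {"source": "monitoring", "action": "heartbeat"},
    {"source": "workstation-17", "action": "powershell_exec"},
    {"source": "backup-service", "action": "file_read"},
]
kept = list(security_relevant(events))
print(kept)
```

A static allowlist is the simplest possible filter. It is shown here only to make the idea concrete, not as a recommended design.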

This perception framework aligns with the PEAS model we’ve discussed in our guide on how intelligent agents work, adapted specifically for security contexts.

2. Analysis and Threat Detection Engine

This is the cognitive core where the agent processes perceptual data to identify potential threats. Modern security agents employ multiple detection techniques simultaneously:

Signature-based detection: Matching known attack patterns and malware signatures. This approach is fast and accurate for known threats but blind to novel attacks.

Anomaly-based detection: Establishing baselines of normal behaviour and flagging deviations. Machine learning models identify statistical outliers that may indicate:

- Unusual login times or locations

- Abnormal data transfer volumes

- Unexpected process executions

- Atypical network connections

Behavioural analysis: Understanding the context and intent behind actions. For example, a database administrator accessing customer records during business hours is normal; the same action at 3 AM from an unusual location is suspicious.

Threat intelligence correlation: Comparing local observations against global threat databases to identify known malicious infrastructure or attack campaigns.

Predictive analytics: Using historical attack data to forecast likely future threats and proactively strengthen defences.

The most effective security agents use ensemble approaches that combine multiple detection methods, significantly reducing false positives while improving threat coverage. This multi-layered strategy mirrors the hybrid architectures we see in modern AI agent systems.
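To make the anomaly and ensemble ideas concrete, here is a small sketch under stated assumptions: a z-score check stands in for the anomaly model, and a weighted vote stands in for the ensemble. The detector names, weights, threshold, and traffic figures are all illustrative and not taken from any particular product.

```python
from statistics import mean, stdev


def zscore_anomaly(history: list[float], value: float, threshold: float = 3.0) -> bool:
    """Flag a value more than `threshold` standard deviations from the baseline."""
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return value != mu
    return abs(value - mu) / sigma > threshold


def ensemble_score(signals: dict[str, bool], weights: dict[str, float]) -> float:
    """Weighted share of detectors that fired, in the range [0, 1]."""
    total = sum(weights.values())
    fired = sum(weights[name] for name, hit in signals.items() if hit)
    return fired / total


# Hypothetical daily outbound transfer volumes (MB) for one host.
baseline_mb = [120, 135, 110, 128, 140, 125, 131]

signals = {
    "signature": False,                             # no known signature matched
    "anomaly": zscore_anomaly(baseline_mb, 900),    # today's volume is far above baseline
    "threat_intel": True,                           # destination appears in a feed
}
weights = {"signature": 0.5, "anomaly": 0.3, "threat_intel": 0.2}
score = ensemble_score(signals, weights)
print(f"ensemble score: {score:.2f}")
```

A real agent would likely use learned models rather than a single z-score, but the structure is the same: several independent signals are combined into one score that downstream logic can act on.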

3. Decision-Making and Risk Assessment Module

Not every anomaly represents a genuine threat. The decision-making module evaluates detected events to determine:

- Threat severity: How dangerous is this activity?

- Confidence level: How certain are we this is malicious?

- Business impact: What systems or data are at risk?

- Response urgency: How quickly must we act?

This risk assessment often employs utility-based reasoning, weighing the potential damage of a threat against the operational impact of defensive actions. For instance, blocking a suspicious IP might stop an attack but could also disrupt legitimate business operations if it’s a false positive.
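The trade-off described above can be sketched as an expected-cost comparison. This is a deliberately simplified model: it assumes that blocking only costs something when the traffic was actually legitimate, and that allowing only costs something when it was malicious. The probabilities and cost figures are made up for illustration.

```python
def expected_utility(p_malicious: float, damage_if_ignored: float,
                     disruption_cost: float) -> dict[str, float]:
    """Return the expected utility (negative expected cost) of each action."""
    return {
        # Blocking hurts only when the event was a false positive.
        "block": -(1.0 - p_malicious) * disruption_cost,
        # Allowing hurts only when the event was truly malicious.
        "allow": -p_malicious * damage_if_ignored,
    }


def choose_action(p_malicious: float, damage_if_ignored: float,
                  disruption_cost: float) -> str:
    """Pick the action with the highest expected utility."""
    utilities = expected_utility(p_malicious, damage_if_ignored, disruption_cost)
    return max(utilities, key=utilities.get)


# Likely attack: blocking is clearly cheaper than the expected damage.
print(choose_action(p_malicious=0.70, damage_if_ignored=100_000, disruption_cost=20_000))
# Probably benign: the expected disruption outweighs the expected damage.
print(choose_action(p_malicious=0.02, damage_if_ignored=100_000, disruption_cost=20_000))
```

In practice the hard part is estimating the inputs, which is why the utility function needs tuning to match organizational priorities.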

For deeper insights into utility-based decision-making, see our practical implementation guide for goal-based and utility-based agents.

4. Response and Remediation Layer

Once a threat is confirmed, the action layer executes defensive measures:

Automated responses include:

- Network isolation: Quarantining compromised systems to prevent lateral movement

- Access revocation: Disabling compromised accounts or credentials

- Traffic blocking: Dropping packets from malicious sources at firewalls or endpoints

- Process termination: Killing malicious processes before they can execute payloads

- Evidence preservation: Capturing forensic data for investigation

- Alert escalation: Notifying human analysts for complex threats requiring judgment

The sophistication of automated responses varies based on confidence levels. High-confidence threats (known malware signatures) trigger immediate automated blocking, while ambiguous situations may require human approval before taking disruptive actions.
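One way to express this tiering is a small policy function that maps detection confidence to a response mode. The thresholds (0.95 and 0.60) and the response names below are assumptions for the sake of the sketch; each organization would set its own.

```python
from enum import Enum


class Response(Enum):
    AUTO_CONTAIN = "auto_contain"          # act immediately, no human involved
    REQUEST_APPROVAL = "request_approval"  # queue the action for an analyst
    MONITOR = "monitor"                    # record and keep watching


def select_response(confidence: float, disruptive: bool,
                    auto_threshold: float = 0.95,
                    review_threshold: float = 0.60) -> Response:
    """Choose a response mode from detection confidence and action impact."""
    if confidence >= auto_threshold:
        return Response.AUTO_CONTAIN
    if confidence >= review_threshold:
        # Ambiguous detections only act on their own when the action is harmless.
        return Response.REQUEST_APPROVAL if disruptive else Response.AUTO_CONTAIN
    return Response.MONITOR


print(select_response(0.99, disruptive=True))   # known malware signature
print(select_response(0.70, disruptive=True))   # ambiguous, disruptive action
print(select_response(0.20, disruptive=True))   # weak signal
```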

5. Learning and Adaptation System

The most advanced security agents continuously improve through experience:

- Supervised learning: Security analysts label events as malicious or benign, training models to recognize similar patterns

- Reinforcement learning: Agents receive feedback on response effectiveness, optimizing future actions

- Threat model updates: Incorporating new attack techniques as they emerge

- False positive reduction: Learning which anomalies consistently prove benign in specific environments

This learning capability distinguishes intelligent agents from static rule-based systems, enabling them to adapt to evolving threats without constant manual reconfiguration. This aligns with the broader category of learning agents we’ve explored previously.
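As a rough illustration of false positive reduction from analyst feedback, the sketch below tracks how often each detection rule is confirmed as malicious and flags rules whose precision stays low. The class name, rule IDs, and thresholds are hypothetical, and a real learning agent would retrain its models rather than just count verdicts.

```python
from collections import defaultdict
from typing import Optional


class FeedbackTracker:
    """Track analyst verdicts per detection rule (hypothetical helper)."""

    def __init__(self, min_samples: int = 20, min_precision: float = 0.10):
        self.min_samples = min_samples
        self.min_precision = min_precision
        self.counts = defaultdict(lambda: {"malicious": 0, "benign": 0})

    def record(self, rule_id: str, verdict: str) -> None:
        if verdict not in ("malicious", "benign"):
            raise ValueError(f"unknown verdict: {verdict}")
        self.counts[rule_id][verdict] += 1

    def precision(self, rule_id: str) -> Optional[float]:
        c = self.counts[rule_id]
        total = c["malicious"] + c["benign"]
        return c["malicious"] / total if total else None

    def should_demote(self, rule_id: str) -> bool:
        """True when enough verdicts exist and too few were real threats."""
        c = self.counts[rule_id]
        total = c["malicious"] + c["benign"]
        if total < self.min_samples:
            return False
        return c["malicious"] / total < self.min_precision


tracker = FeedbackTracker()
for _ in range(19):
    tracker.record("odd-hours-login", "benign")
tracker.record("odd-hours-login", "malicious")
print(tracker.precision("odd-hours-login"), tracker.should_demote("odd-hours-login"))
```

Demoting a noisy rule (for example, lowering its alert priority) is safer than silencing it outright, since a rule that is usually benign can still catch the occasional real threat.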

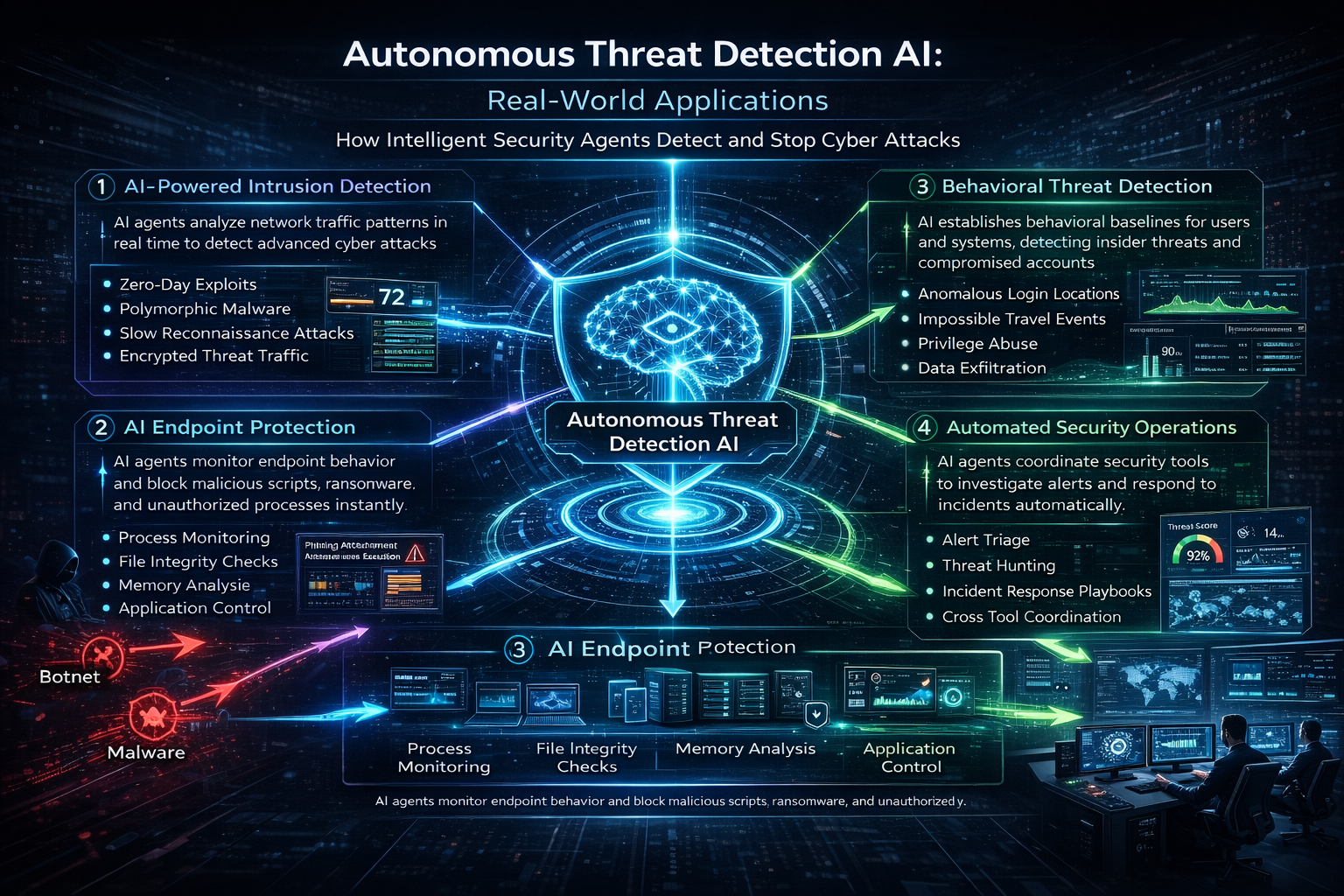

Autonomous Threat Detection AI: Real-World Applications

Autonomous threat detection AI is transforming how organizations defend against cyber attacks across multiple security domains. Let’s explore practical applications where intelligent agents are making measurable impact in 2026.

1. Intelligent Intrusion Detection Systems (IDS)

Traditional intrusion detection systems rely heavily on signature databases and rule sets that require constant manual updates. Intelligent intrusion detection systems powered by AI agents offer significant advantages:

Network-based IDS agents analyze traffic patterns in real time, identifying:

- Zero-day exploits: Attacks leveraging previously unknown vulnerabilities

- Polymorphic malware: Threats that change their code to evade signature detection

- Low-and-slow attacks: Stealthy reconnaissance spread over weeks or months

- Encrypted threat traffic: Malicious activity hidden within SSL/TLS connections

Example implementation:

An AI agent monitoring network traffic notices an unusual pattern: a workstation is making DNS queries for domains with algorithmically generated names, a hallmark of malware using domain generation algorithms (DGAs) for command-and-control communication. The agent:

- Correlates this activity with threat intelligence feeds

- Identifies the workstation as likely compromised

- Automatically isolates the system from the network

- Captures memory and disk forensics

- Alerts the security operations centre (SOC) with full context

All of this happens in seconds, before the malware can exfiltrate data or spread laterally.
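One simple heuristic for spotting algorithmically generated names is character entropy: random-looking labels tend to have higher Shannon entropy than dictionary-based ones. The sketch below is only that heuristic, with an assumed threshold of 3.5 bits and an assumed minimum length of 12; production detectors usually combine several features and a trained classifier. The domain names are invented.

```python
import math
from collections import Counter


def shannon_entropy(text: str) -> float:
    """Shannon entropy of the character distribution, in bits per character."""
    if not text:
        return 0.0
    n = len(text)
    return -sum((c / n) * math.log2(c / n) for c in Counter(text).values())


def looks_generated(domain: str, entropy_threshold: float = 3.5,
                    min_length: int = 12) -> bool:
    """Heuristic DGA check on the label directly left of the top-level domain."""
    labels = domain.lower().rstrip(".").split(".")
    # Simplification: ignores multi-part suffixes such as "co.uk".
    label = labels[-2] if len(labels) >= 2 else labels[0]
    return len(label) >= min_length and shannon_entropy(label) > entropy_threshold


for name in ("google.com", "xk7qz9vbt2mwp4rj.net"):
    print(name, looks_generated(name))
```

Entropy alone produces both false positives (CDN hostnames, hashes in subdomains) and false negatives (DGAs built from word lists), which is why the agent in the example also correlates with threat intelligence before acting.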

2. Endpoint Detection and Response (EDR)

EDR agents operate directly on endpoints (laptops, servers, mobile devices), providing visibility into system-level activity:

- Process behaviour monitoring: Detecting malicious process injection, privilege escalation, or credential dumping

- File integrity monitoring: Identifying unauthorized modifications to critical system files

- Memory analysis: Detecting fileless malware that operates entirely in RAM

- Application control: Preventing unauthorized software execution

Real-world scenario:

An employee receives a phishing email with a malicious attachment. When opened, the document attempts to execute a PowerShell script that downloads ransomware. The EDR agent:

- Recognizes the unusual PowerShell execution pattern

- Blocks the script before it can download the payload

- Quarantines the malicious document

- Notifies the user and security team

- Initiates a scan of other systems for similar indicators

The attack is stopped at the initial access stage, preventing what could have been a devastating ransomware incident.

3. User and Entity Behaviour Analytics (UEBA)

UEBA agents establish behavioural baselines for users and systems, detecting insider threats and compromised credentials:

- Anomalous access patterns: Users accessing data outside their normal scope

- Impossible travel: Login events from geographically distant locations within impossible timeframes

- Privilege abuse: Administrators using elevated permissions for unusual activities

- Data exfiltration: Unusual volumes of data being copied or transmitted
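The impossible-travel check in particular is easy to sketch: compute the great-circle distance between two login locations and compare the implied speed with what a traveller could plausibly achieve. The 900 km/h ceiling (roughly airliner speed) and the coordinates are assumptions, and real deployments also have to account for VPNs and imprecise IP geolocation.

```python
import math
from datetime import datetime

EARTH_RADIUS_KM = 6371.0


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points given in decimal degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlambda = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def impossible_travel(login_a: tuple, login_b: tuple, max_speed_kmh: float = 900.0) -> bool:
    """Each login is (timestamp, latitude, longitude)."""
    (t1, lat1, lon1), (t2, lat2, lon2) = login_a, login_b
    hours = abs((t2 - t1).total_seconds()) / 3600
    distance = haversine_km(lat1, lon1, lat2, lon2)
    if hours == 0:
        return distance > 0
    return distance / hours > max_speed_kmh


london = (datetime(2026, 1, 10, 9, 0), 51.5074, -0.1278)
new_york_1h = (datetime(2026, 1, 10, 10, 0), 40.7128, -74.0060)
new_york_10h = (datetime(2026, 1, 10, 19, 0), 40.7128, -74.0060)

print(impossible_travel(london, new_york_1h))    # one hour apart
print(impossible_travel(london, new_york_10h))   # ten hours apart
```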

Case study:

A financial services company deploys a UEBA agent that learns normal patterns for each employee. One day, a marketing manager’s account begins accessing customer financial records, something this user has never done before. The agent:

- Flags this as highly anomalous behaviour

- Requires additional authentication (step-up verification)

- Notifies the security team of potential account compromise

- Temporarily restricts access to sensitive systems

Investigation reveals the account was compromised through credential stuffing. The agent’s early detection prevented unauthorized access to customer data, avoiding regulatory penalties and reputational damage.

4. Security Orchestration, Automation, and Response (SOAR)

SOAR platforms use intelligent agents to coordinate multiple security tools and automate incident response workflows:

- Alert triage: Automatically investigating and prioritizing security alerts

- Threat hunting: Proactively searching for indicators of compromise across the environment

- Incident response playbooks: Executing standardized response procedures automatically

- Cross-tool coordination: Orchestrating actions across firewalls, EDR, SIEM, and other security tools

Operational impact:

A large enterprise receives 10,000 security alerts daily. Before implementing SOAR agents, analysts spent 80% of their time on alert triage, investigating false positives. With intelligent agents handling initial investigation and filtering, analysts now focus on the 2-3% of alerts representing genuine threats, dramatically improving response effectiveness.
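A triage step like this can be approximated by scoring each alert and only escalating those above a cut-off. The scoring formula (severity times asset criticality times confidence), the 1-5 scales, and the cut-off of 10 are assumptions made for this sketch rather than any SOAR product’s actual logic.

```python
from dataclasses import dataclass


@dataclass
class Alert:
    alert_id: str
    severity: int            # 1 (low) to 5 (critical), hypothetical scale
    asset_criticality: int   # 1 (lab machine) to 5 (crown jewels)
    confidence: float        # detector confidence in [0, 1]


def priority(alert: Alert) -> float:
    """Simple multiplicative priority score."""
    return alert.severity * alert.asset_criticality * alert.confidence


def triage(alerts: list[Alert], escalate_above: float = 10.0):
    """Split alerts into (escalated, deferred), highest priority first."""
    ranked = sorted(alerts, key=priority, reverse=True)
    escalated = [a for a in ranked if priority(a) >= escalate_above]
    deferred = [a for a in ranked if priority(a) < escalate_above]
    return escalated, deferred


alerts = [
    Alert("A-1", severity=2, asset_criticality=1, confidence=0.3),
    Alert("A-2", severity=5, asset_criticality=5, confidence=0.9),
    Alert("A-3", severity=4, asset_criticality=4, confidence=0.8),
]
escalated, deferred = triage(alerts)
print([a.alert_id for a in escalated], [a.alert_id for a in deferred])
```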

For more examples of intelligent agents solving real-world problems, check out our article on real-world applications across industries.

AI Agents vs Traditional Security Tools: Key Advantages

Why are organizations rapidly adopting AI agents in security over traditional tools? The advantages are compelling:

1. Speed and Scale

Human analysts can investigate perhaps 50-100 alerts per day. AI agents can analyze millions of events per second, identifying threats that would be impossible to detect manually.

In cybersecurity, speed is everything. The average time from initial compromise to data exfiltration is measured in hours. Automated agents respond in milliseconds, often stopping attacks before they progress beyond initial access.

2. Consistency and Reliability

Human analysts have bad days, get tired, and make mistakes. AI agents maintain consistent vigilance 24/7/365, never missing a shift or overlooking a critical alert due to fatigue.

3. Handling Complexity

Modern cyber attacks involve multiple stages across diverse systems: initial phishing, lateral movement through the network, privilege escalation, and data exfiltration. Correlating these distributed events requires analyzing data from dozens of sources simultaneously, a task where AI agents excel.

4. Adaptive Learning

Traditional security tools require manual updates to detect new threats. Intelligent agents learn from each attack attempt, continuously improving detection accuracy without constant human intervention.

5. Reducing Alert Fatigue

Security teams suffer from “alert fatigue”: so many false positives that genuine threats get overlooked. AI agents dramatically reduce false positives through contextual analysis, helping analysts focus on real threats.

This represents a fundamental shift from reactive security (responding to known threats) to proactive defence (predicting and preventing novel attacks), a distinction we’ve explored in our comparison of AI vs intelligent agents.

Types of Security Agents and Their Use Cases

Different security challenges require different agent architectures. Understanding these distinctions helps you select the right approach for your specific needs.

1. Reactive Security Agents

Reactive agents respond immediately to specific triggers without maintaining historical context:

Use cases:

- Firewall rules: Blocking traffic from known malicious IPs

- Signature-based antivirus: Quarantining files matching malware signatures

- Automated patching: Applying security updates when vulnerabilities are announced

Advantages: Extremely fast, low computational overhead, highly predictable

Limitations: Only effective against known threats, cannot detect novel attack patterns
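A reactive agent is essentially a condition-action rule. The sketch below shows the firewall use case with Python’s standard `ipaddress` module; the blocked ranges are reserved documentation addresses used as placeholders, not real threat data.

```python
import ipaddress

# Placeholder blocklist built from reserved documentation ranges.
BLOCKED_NETWORKS = [
    ipaddress.ip_network("203.0.113.0/24"),
    ipaddress.ip_network("198.51.100.42/32"),
]


def reactive_decision(source_ip: str) -> str:
    """Condition-action rule: block if the source is on the list, else allow."""
    addr = ipaddress.ip_address(source_ip)
    if any(addr in network for network in BLOCKED_NETWORKS):
        return "block"
    return "allow"


for ip in ("203.0.113.9", "198.51.100.42", "198.51.100.43"):
    print(ip, reactive_decision(ip))
```

The function keeps no state between calls, which is what makes reactive agents fast and predictable, and also why they cannot notice anything that is not already on the list.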

2. Model-Based Security Agents

These agents maintain internal models of normal system behaviour, enabling detection of deviations:

Use cases:

- Anomaly detection: Identifying unusual network traffic or user behaviour

- Baseline monitoring: Detecting configuration changes or unauthorized software installations

- Compliance monitoring: Ensuring systems remain in approved states

Advantages: Can detect unknown threats, adapts to environment-specific normal behaviour

Limitations: Requires training period to establish baselines, may generate false positives during legitimate changes

3. Goal-Based Security Agents

Goal-based agents plan sequences of actions to achieve security objectives:

Use cases:

- Threat hunting: Proactively searching for indicators of compromise

- Incident investigation: Automatically gathering evidence and reconstructing attack timelines

- Vulnerability management: Prioritizing and remediating security weaknesses based on risk

Advantages: Can handle complex, multi-step security tasks autonomously

Limitations: Higher computational requirements, may require more sophisticated implementation

4. Utility-Based Security Agents

When security decisions involve trade-offs, utility-based agents evaluate options based on overall value:

Use cases:

- Risk-based authentication: Requiring additional verification based on login context

- Automated response decisions: Balancing threat containment against operational disruption

- Resource allocation: Distributing security monitoring capacity across critical assets

Advantages: Makes nuanced decisions considering multiple factors

Limitations: Requires careful tuning of utility functions to match organizational priorities

5. Learning Security Agents

Learning agents continuously improve through experience:

Use cases:

- Adaptive threat detection: Improving accuracy by learning from analyst feedback

- Phishing detection: Recognizing increasingly sophisticated social engineering attempts

- Malware classification: Identifying new malware variants based on behavioural similarities

Advantages: Becomes more effective over time, adapts to evolving threats

Limitations: Requires quality training data, potential for adversarial manipulation

For more on implementing different agent types, see our practical guide to goal-based, utility-based, and learning agents.

Challenges and Considerations for Implementing Security Agents

While intelligent agents in cybersecurity offer tremendous benefits, several challenges must be addressed for successful implementation.

1. Adversarial Machine Learning

Attackers are developing techniques to evade or manipulate AI-based detection systems:

- Model poisoning: Injecting malicious training data to corrupt learning algorithms

- Evasion attacks: Crafting malware that exploits blind spots in AI models

- Adversarial examples: Slightly modified inputs that cause misclassification

Mitigation strategies:

- Implement ensemble models that are harder to evade

- Use adversarial training to improve robustness

- Maintain human oversight for critical decisions

- Regularly test agents against adversarial techniques

2. False Positives and Alert Fatigue

Even advanced AI agents generate false positives. Too many false alarms lead to alert fatigue, where analysts ignore warnings, potentially missing genuine threats.

Best practices:

- Tune detection thresholds based on organizational risk tolerance

- Implement confidence scoring to prioritize high-certainty alerts

- Provide rich context with each alert to facilitate rapid investigation

- Continuously refine models based on analyst feedback

3. Explainability and Trust

Security teams need to understand why an agent flagged something as malicious. “Black box” AI decisions without explanation erode trust and make investigation difficult.

Solutions:

- Implement explainable AI techniques that articulate reasoning

- Provide detailed evidence supporting each detection

- Allow analysts to query agents about decision factors

- Maintain audit trails of agent actions for accountability

This challenge mirrors broader concerns about AI transparency we’ve discussed in our article on intelligent agents vs machine learning vs deep learning.

4. Data Privacy and Compliance

Security monitoring involves collecting and analyzing sensitive data, raising privacy concerns:

- Regulatory compliance: GDPR, CCPA, and other regulations restrict data collection and processing

- Employee privacy: Monitoring user behaviour must balance security with privacy rights

- Data retention: How long should security data be stored?

Compliance strategies:

- Implement privacy-preserving techniques like differential privacy

- Clearly define data collection scope and retention policies

- Ensure security agents operate within legal and ethical boundaries

- Conduct regular privacy impact assessments

5. Integration Complexity

Enterprise environments include dozens of security tools from different vendors. Integrating intelligent agents across this heterogeneous landscape is challenging:

Integration considerations:

- Use standardized APIs and data formats (STIX, TAXII, OpenC2)

- Implement security orchestration platforms to coordinate multiple tools

- Ensure agents can consume and produce data in common formats

- Plan for ongoing maintenance as tools and agents evolve
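To give a feel for what producing data in a common format looks like, the sketch below builds a STIX 2.1-style indicator as plain JSON using only the standard library. The helper name `make_stix_indicator` is hypothetical, the IP is a documentation address, and the field set reflects the commonly cited required properties of a STIX 2.1 Indicator; it should be validated against the specification (or built with a dedicated STIX library) before real use.

```python
import json
import uuid
from datetime import datetime, timezone


def make_stix_indicator(ip: str, name: str) -> dict:
    """Build a minimal STIX 2.1-style indicator for a malicious IPv4 address."""
    now = (
        datetime.now(timezone.utc)
        .isoformat(timespec="milliseconds")
        .replace("+00:00", "Z")
    )
    return {
        "type": "indicator",
        "spec_version": "2.1",
        "id": f"indicator--{uuid.uuid4()}",
        "created": now,
        "modified": now,
        "name": name,
        "pattern": f"[ipv4-addr:value = '{ip}']",
        "pattern_type": "stix",
        "valid_from": now,
    }


indicator = make_stix_indicator("198.51.100.7", "Suspected C2 server")
print(json.dumps(indicator, indent=2))
```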

For insights on frameworks that facilitate integration, explore our guide to top agentic AI frameworks in 2026.

The Future of Intelligent Agents in Cybersecurity

As we progress through 2026, several trends are shaping the evolution of AI-driven security:

1. Autonomous Security Operations Centers (SOCs)

The vision of fully autonomous SOCs, where AI agents handle the majority of security operations with minimal human intervention, is becoming reality. Analysts transition from alert triage to strategic threat hunting and security architecture design.

2. Predictive Threat Intelligence

Beyond detecting current attacks, future agents will predict likely threats based on:

- Geopolitical events affecting threat actor motivation

- Vulnerability disclosure patterns

- Dark web intelligence about planned campaigns

- Industry-specific threat trends

This predictive capability enables proactive defence before attacks materialize.

3. Collaborative Multi-Agent Systems

Rather than isolated agents, future security architectures will feature coordinated agent teams:

- Network agents monitoring traffic patterns

- Endpoint agents tracking system behaviour

- Cloud agents securing infrastructure

- Application agents protecting software

These agents share intelligence and coordinate responses, providing defence-in-depth through collaboration.

4. Quantum-Resistant Security Agents

As quantum computing advances, current encryption methods face obsolescence. Future security agents will need to:

- Detect quantum-based attacks

- Implement post-quantum cryptography

- Transition systems to quantum-resistant algorithms

5. Human-Agent Teaming

The future isn’t fully autonomous security; it’s effective collaboration between human expertise and AI capabilities. Agents handle volume and speed; humans provide judgment, creativity, and ethical oversight.

This collaborative model represents the practical application of concepts we’ve explored in modern AI agent systems.

Practical Implementation: Building Security Agents

If you’re ready to implement AI security automation agents, here’s a practical roadmap:

Step 1: Define Security Objectives

Start with specific security challenges:

- What threats are you most concerned about?

- Where are your current detection gaps?

- What response actions would provide the most value?

Step 2: Assess Your Data Foundation

Intelligent agents require quality data:

- Coverage: Are you collecting logs from all critical systems?

- Quality: Is data normalized and enriched?

- Retention: Do you maintain sufficient historical data for baseline establishment?

- Access: Can agents query data sources in real time?

Step 3: Choose the Right Agent Architecture

Match agent type to security challenge:

- Known threats → Reactive agents

- Anomaly detection → Model-based agents

- Complex investigations → Goal-based agents

- Risk-based decisions → Utility-based agents

- Evolving threats → Learning agents

Step 4: Start with High-Value Use Cases

Begin with scenarios offering clear ROI:

- Alert triage: Reduce analyst workload

- Phishing detection: Protect against a common attack vector

- Anomaly detection: Identify insider threats and compromised accounts

Step 5: Implement Human-in-the-Loop Initially

Don’t grant full autonomy immediately:

- Start with agents recommending actions for human approval

- Gradually increase autonomy as confidence grows

- Maintain human oversight for high-impact decisions

Step 6: Measure and Iterate

Track key metrics:

- Detection rate: Percentage of threats identified

- False positive rate: Benign events incorrectly flagged

- Response time: Speed from detection to containment

- Analyst efficiency: Time saved through automation

Use these metrics to continuously refine agent performance.
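As a sketch of how these metrics might be computed from labelled outcomes, the snippet below derives detection rate and false positive rate from confusion-matrix counts and averages containment times. The counts and durations are invented sample values, and the function names are illustrative.

```python
from statistics import mean


def detection_metrics(tp: int, fp: int, tn: int, fn: int) -> dict[str, float]:
    """Detection rate (recall) and false positive rate from outcome counts."""
    return {
        "detection_rate": tp / (tp + fn) if (tp + fn) else 0.0,
        "false_positive_rate": fp / (fp + tn) if (fp + tn) else 0.0,
    }


def mean_time_to_contain(durations_seconds: list[float]) -> float:
    """Average time from detection to containment, in seconds."""
    return mean(durations_seconds) if durations_seconds else 0.0


# Invented sample month: 50 real threats, 9,950 benign events.
metrics = detection_metrics(tp=45, fp=30, tn=9920, fn=5)
mttc = mean_time_to_contain([30, 90, 60])

print(f"detection rate: {metrics['detection_rate']:.1%}")
print(f"false positive rate: {metrics['false_positive_rate']:.2%}")
print(f"mean time to contain: {mttc:.0f} s")
```

Note that even a false positive rate well under 1% still means dozens of benign alerts when the event volume is large, which is why both numbers need to be tracked together.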

For more on building autonomous systems, see our collection of real-world intelligent agent examples.

Conclusion

Intelligent agents in cybersecurity represent a fundamental shift in how organizations defend against cyber threats. By combining continuous monitoring, advanced analytics, autonomous decision-making, and adaptive learning, AI agents in security provide capabilities that human analysts and traditional tools simply cannot match.

From intelligent intrusion detection systems that identify zero-day exploits to autonomous threat detection AI that responds to attacks in milliseconds, these agents are becoming indispensable components of modern security architectures. The AI security automation agents we’ve explored, spanning network security, endpoint protection, behavioural analytics, and security orchestration, demonstrate the breadth of applications transforming the cybersecurity landscape in 2026.

However, success requires more than just deploying technology. Effective implementation demands quality data foundations, careful agent architecture selection, ongoing tuning to reduce false positives, and thoughtful human-agent collaboration. Organizations must also address challenges around adversarial machine learning, explainability, privacy compliance, and integration complexity.

The future of cybersecurity isn’t about replacing human security professionals; it’s about augmenting human expertise with AI capabilities that handle volume, speed, and complexity at scales impossible for humans alone. As threats continue to evolve, intelligent agents will become not just advantageous but essential for maintaining effective defences.

Ready to explore more about intelligent agents? Check out our comprehensive guides on applications across healthcare, finance, and e-commerce and discover what intelligent agents are and how they work.

Are you implementing intelligent agents in your security operations? What challenges have you encountered? Share your experiences in the comments below!

Hi, I’m Pragya.

I write about AI tools, digital trends, and emerging technologies in a way that’s simple, practical, and easy to apply. I enjoy exploring new AI platforms, testing their features, and breaking them down into clear guides that actually help people use them confidently.

My focus is not just on writing content, but on creating value. I believe powerful technology should feel accessible, not overwhelming. That’s why I aim to turn complex tools into actionable insights for creators, marketers, and growing online businesses.

I’m constantly learning, researching, and staying updated with the fast-moving AI space so readers always get relevant and useful information.