If you’ve ever wondered how self-driving cars navigate complex traffic or how medical diagnosis systems make accurate predictions, you’re looking at intelligent agents in action. These autonomous systems are revolutionizing industries, with the AI agents market projected to reach $50.31 billion by 2030, growing at a staggering 45.8% annually.

- What Are Intelligent Agents?

- Understanding PEAS in Artificial Intelligence

- Performance Measure: Defining Success

- Environment: The Agent’s Operating Context

- Actuators: Taking Action

- Sensors: Perceiving the World

- Real-World PEAS Examples for Developers

- Decision-Making in Intelligent Agents

- Implementing Intelligent Agents: Developer Considerations

- 1. Workflow vs. Agent Architecture

- 2. Data Pipeline Design

- 3. Model Selection and Integration

- 4. Observability and Control

- Challenges and Future Directions

- Conclusion

But intelligent agents go far beyond simple automation. Understanding the architecture, decision-making processes, and environmental interactions of these systems is crucial for developers building next-generation AI applications.

In this comprehensive guide, we’ll break down the PEAS framework, compare the different types of agent environments, and explore the decision-making mechanisms that power intelligent agents. Whether you’re building autonomous systems or simply want to understand AI architecture better, this article will give you the foundational knowledge you need.

What Are Intelligent Agents?

An intelligent agent is a software entity that perceives its environment through sensors, processes information, and takes actions through actuators to achieve specific goals. Unlike traditional programs that follow rigid instructions, intelligent agents exhibit three key characteristics:

- Perception: Gathering information about their environment through sensors

- Decision-Making: Processing information and determining optimal actions

- Action: Executing decisions through actuators to influence the environment

Think of a thermostat as a simple intelligent agent. It perceives temperature through sensors, decides whether heating or cooling is needed based on the target temperature, and acts by turning the HVAC system on or off.
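To make the perceive, decide, act split concrete, here is a minimal sketch of the thermostat in Python. The class name, the 21 °C target, and the 0.5 °C tolerance band are assumptions chosen for illustration, and the sensor and HVAC hardware are replaced by plain values and strings.

```python
from dataclasses import dataclass


@dataclass
class Thermostat:
    """Toy agent with one temperature sensor and one HVAC actuator."""
    target_c: float = 21.0     # assumed target temperature
    tolerance_c: float = 0.5   # assumed dead band around the target

    def perceive(self, sensor_reading_c: float) -> float:
        # Perception: a real device would read hardware here.
        return sensor_reading_c

    def decide(self, temperature_c: float) -> str:
        # Decision-making: compare the percept with the target.
        if temperature_c < self.target_c - self.tolerance_c:
            return "heat"
        if temperature_c > self.target_c + self.tolerance_c:
            return "cool"
        return "off"

    def act(self, command: str) -> str:
        # Action: stand-in for switching the HVAC system.
        return f"HVAC -> {command}"

    def step(self, sensor_reading_c: float) -> str:
        return self.act(self.decide(self.perceive(sensor_reading_c)))


thermostat = Thermostat()
for reading in (18.0, 21.2, 24.5):
    print(reading, thermostat.step(reading))
```

Keeping the three stages as separate methods means each one can be swapped out (a different sensor, a smarter decision rule) without touching the others.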

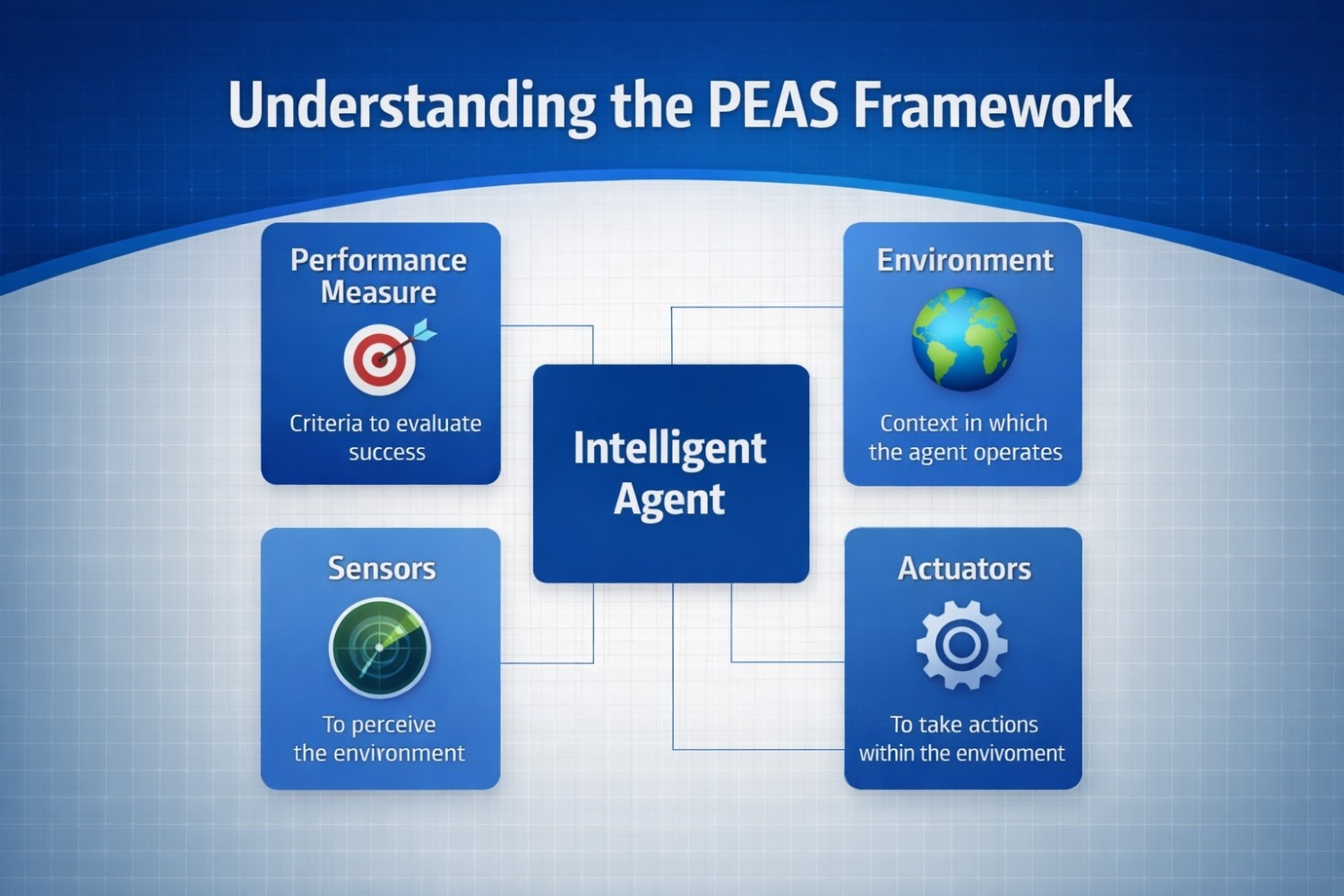

Understanding PEAS in Artificial Intelligence

The PEAS framework is the cornerstone for designing and analyzing intelligent agents. PEAS stands for Performance measure, Environment, Actuators, and Sensors: four interconnected components that together define how an AI system operates.

Performance Measure: Defining Success

The performance measure establishes the criteria for evaluating an agent’s success. It’s the objective function the agent optimizes, and it must be specific, measurable, and aligned with real-world goals.

Examples:

- Self-driving car: Safe navigation, fuel efficiency, passenger comfort, arrival time

- Medical diagnosis AI: Diagnostic accuracy, minimizing false negatives, processing speed

- E-commerce recommendation system: Click-through rates, conversion rates, user satisfaction

For developers, defining clear performance measures is critical. A poorly defined metric can lead agents to optimize for the wrong objectives – a phenomenon known as “reward hacking” in reinforcement learning.
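One way to see why the choice of metric matters is to score two behaviours under two different performance measures. The sketch below assumes every metric is already scaled to the range 0 to 1; the function name, the metric values, and the weights are all made up for illustration.

```python
def performance_score(metrics: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of metrics that are already scaled to [0, 1]."""
    missing = set(weights) - set(metrics)
    if missing:
        raise ValueError(f"missing metrics: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(weights[name] * metrics[name] for name in weights) / total_weight


# Hypothetical measurements for two recommendation strategies.
helpful = {"click_through": 0.4, "conversion": 0.5, "satisfaction": 0.8}
clickbait = {"click_through": 0.9, "conversion": 0.1, "satisfaction": 0.2}

# A narrow measure rewards clicks only; a balanced one mixes all three.
narrow = {"click_through": 1.0}
balanced = {"click_through": 0.2, "conversion": 0.4, "satisfaction": 0.4}

for label, weights in (("narrow", narrow), ("balanced", balanced)):
    print(label,
          round(performance_score(helpful, weights), 2),
          round(performance_score(clickbait, weights), 2))
```

Under the narrow measure the clickbait strategy wins, while the balanced measure prefers the helpful one. An agent optimizing the narrow metric would drift toward the behaviour you did not want.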

Environment: The Agent’s Operating Context

An agent’s environment encompasses all the external factors that influence its behaviour. Understanding environmental characteristics is essential because they dictate the challenges your agent will face and the strategies it must employ.

Types of AI Environments

- Fully Observable vs. Partially Observable

In a fully observable environment, the agent has complete knowledge of the world state at each point in time. Chess is fully observable – you can see all pieces and their positions. In contrast, autonomous vehicles operate in partially observable environments where objects can be hidden from view (around corners, behind other vehicles).

- Deterministic vs. Stochastic

A deterministic environment produces predictable outcomes for every action. In chess, moving a piece has a known result. Stochastic environments involve randomness – financial markets, weather conditions, or user behaviour all introduce unpredictability that agents must handle through probabilistic reasoning.

- Static vs. Dynamic

Static environments remain unchanged while the agent deliberates (like a crossword puzzle). Dynamic environments constantly evolve – video games with moving opponents, stock markets, or traffic conditions all require real-time adaptation.

- Discrete vs. Continuous

Discrete environments have a finite number of distinct states and actions (board games), while in continuous environments states and actions vary over continuous ranges (robotic arm control, autonomous driving).

- Episodic vs. Sequential

In episodic environments, each action is independent (spam email classification). Sequential environments require considering how current actions affect future states (chess, autonomous navigation).
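The five dimensions above can be recorded as a small profile that travels with your design notes. The sketch below is one possible encoding; the class name and the wording of the implications are assumptions, and treating chess as static assumes an untimed game.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EnvironmentProfile:
    fully_observable: bool
    deterministic: bool
    static: bool
    discrete: bool
    episodic: bool

    def design_implications(self) -> list[str]:
        """List the extra work each 'harder' property implies."""
        notes = []
        if not self.fully_observable:
            notes.append("track hidden state (objects may be out of view)")
        if not self.deterministic:
            notes.append("use probabilistic reasoning")
        if not self.static:
            notes.append("adapt in real time")
        if not self.discrete:
            notes.append("handle continuous state and action ranges")
        if not self.episodic:
            notes.append("consider how actions affect future states")
        return notes


# Chess as described above; static=True assumes no game clock.
CHESS = EnvironmentProfile(fully_observable=True, deterministic=True,
                           static=True, discrete=True, episodic=False)
DRIVING = EnvironmentProfile(fully_observable=False, deterministic=False,
                             static=False, discrete=False, episodic=False)

print("chess:", CHESS.design_implications())
print("driving:", DRIVING.design_implications())
```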

Actuators: Taking Action

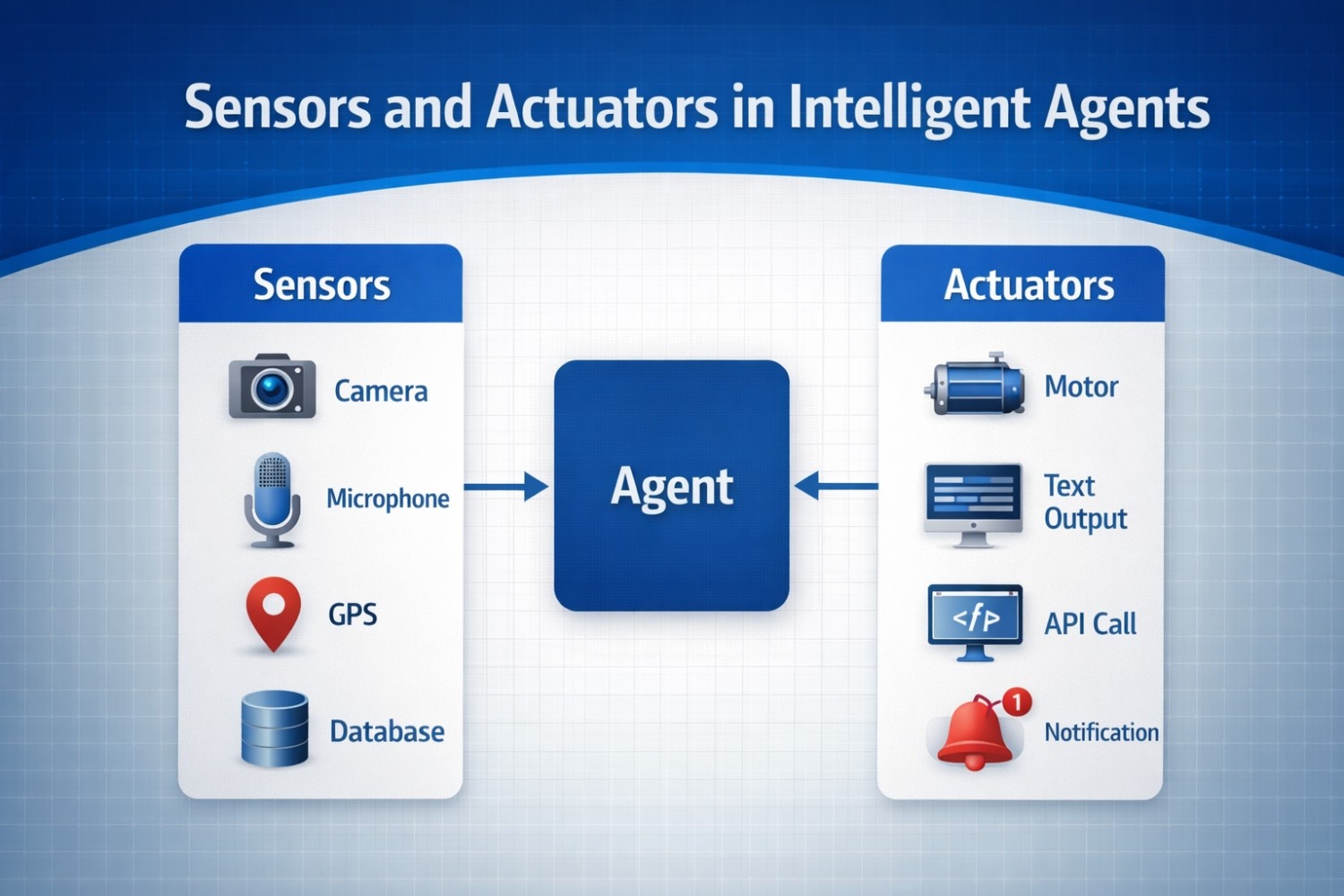

Actuators are the mechanisms enabling agents to influence their environment. These range from physical components to digital outputs:

- Physical actuators: Robotic arms, motors, steering systems, braking mechanisms

- Digital actuators: Text generation, screen displays, API calls, database updates

- Communication actuators: Email notifications, alerts, recommendations

Sensors: Perceiving the World

Sensors provide agents with environmental perception capabilities. The quality, coverage, and reliability of sensors fundamentally limit agent understanding and performance.

Common sensor types:

- Visual sensors: Cameras, LiDAR, thermal imaging

- Audio sensors: Microphones for speech recognition

- Data sensors: GPS, accelerometers, temperature sensors

- Digital sensors: User input, database queries, API responses

Real-World PEAS Examples for Developers

Example 1: Autonomous Vehicle

Performance Measure: Safe navigation, efficient routing, passenger comfort, regulatory compliance, fuel efficiency

Environment: Roads, traffic patterns, pedestrians, weather conditions, traffic signals, other vehicles (dynamic, stochastic, continuous, sequential, partially observable)

Actuators: Steering system, acceleration control, braking system, turn signals, horn

Sensors: Cameras, LiDAR, radar, GPS, ultrasonic sensors, accelerometers

Example 2: Medical Diagnosis AI

Performance Measure: Diagnostic accuracy, minimizing false negatives, processing speed, explanation quality

Environment: Patient records, medical imaging, lab results, medical knowledge databases (static, partially observable, episodic)

Actuators: Diagnostic reports, treatment recommendations, alert notifications, confidence scores

Sensors: Electronic health records, X-rays, MRI scans, CT scans, patient monitoring devices

Example 3: Customer Service Chatbot

Performance Measure: Response accuracy, resolution time, customer satisfaction, escalation rate

Environment: User queries, knowledge base, customer history, product information (dynamic, partially observable, sequential)

Actuators: Text responses, ticket creation, human agent escalation, knowledge base updates

Sensors: Text input, conversation history, customer profile data, sentiment analysis
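A PEAS description also works as a lightweight data structure that can be checked before any agent code is written. The sketch below encodes Example 3 as a frozen dataclass; the class and method names are assumptions, not part of any library.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PEAS:
    name: str
    performance: tuple[str, ...]
    environment: tuple[str, ...]
    actuators: tuple[str, ...]
    sensors: tuple[str, ...]

    def missing_components(self) -> list[str]:
        """Return the names of any PEAS components left empty."""
        parts = ("performance", "environment", "actuators", "sensors")
        return [part for part in parts if not getattr(self, part)]


chatbot = PEAS(
    name="Customer Service Chatbot",
    performance=("response accuracy", "resolution time",
                 "customer satisfaction", "escalation rate"),
    environment=("user queries", "knowledge base",
                 "customer history", "product information"),
    actuators=("text responses", "ticket creation",
               "human agent escalation", "knowledge base updates"),
    sensors=("text input", "conversation history",
             "customer profile data", "sentiment analysis"),
)

draft = PEAS("Unfinished draft", ("uptime",), ("server logs",), ("alerts",), ())

print(chatbot.missing_components())  # empty list: the spec is complete
print(draft.missing_components())    # the draft has no sensors yet
```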

Decision-Making in Intelligent Agents

Understanding how intelligent agents make decisions is crucial for building effective AI systems. Modern agents employ sophisticated architectures that combine perception, reasoning, and action.

Types of Intelligent Agents by Decision-Making Capability

- Simple Reflex Agents

These agents operate on condition-action rules without considering history. A thermostat is a simple reflex agent – if temperature < threshold, turn on heat.

- Model-Based Reflex Agents

These maintain an internal model of the world, tracking current state and understanding how past interactions impact the environment. A robot navigating a room remembers obstacle locations.
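As a rough illustration of the robot example, the sketch below keeps a set of remembered obstacle cells as its internal model. The grid coordinates, the fixed order in which moves are tried, and the class name are assumptions made for the example.

```python
class ModelBasedRobot:
    """Reflex agent that remembers obstacles it has seen on a grid."""

    # Moves are tried in this fixed order (an arbitrary choice).
    MOVES = {"right": (1, 0), "down": (0, 1), "left": (-1, 0), "up": (0, -1)}

    def __init__(self) -> None:
        self.known_obstacles: set[tuple[int, int]] = set()  # internal model

    def choose_move(self, position: tuple[int, int],
                    seen_obstacles: set[tuple[int, int]]) -> str:
        # Update the model with whatever the sensors report right now.
        self.known_obstacles.update(seen_obstacles)
        x, y = position
        for name, (dx, dy) in self.MOVES.items():
            if (x + dx, y + dy) not in self.known_obstacles:
                return name
        return "stay"


robot = ModelBasedRobot()
print(robot.choose_move((0, 0), {(1, 0)}))  # obstacle to the right is visible
print(robot.choose_move((0, 0), set()))     # nothing visible, but it is remembered
```

A simple reflex agent given the second percept would walk straight into the obstacle, because nothing in the current reading warns about it. The remembered model is what changes the decision.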

- Goal-Based Agents

Goal-based agents make decisions by evaluating which actions lead to desired goal states. They can plan ahead and consider future consequences of current actions.

- Utility-Based Agents

These agents maximize a utility function, making trade-offs between competing objectives. A delivery drone might balance speed, fuel efficiency, and safety.
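The drone trade-off can be sketched as a weighted sum over candidate routes. The route names, the scores (scaled 0 to 1), and both weight profiles below are invented for illustration; a real utility function would be derived from the performance measure.

```python
def utility(option: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted sum of an option's scores (each assumed to lie in [0, 1])."""
    return sum(weights[key] * option[key] for key in weights)


def choose_route(routes: dict[str, dict[str, float]],
                 weights: dict[str, float]) -> str:
    """Pick the route with the highest utility under the given weights."""
    return max(routes, key=lambda name: utility(routes[name], weights))


# Hypothetical candidate routes for a delivery drone.
routes = {
    "direct": {"speed": 0.9, "fuel_efficiency": 0.5, "safety": 0.4},
    "detour": {"speed": 0.5, "fuel_efficiency": 0.7, "safety": 0.9},
}

safety_first = {"speed": 0.2, "fuel_efficiency": 0.2, "safety": 0.6}
speed_first = {"speed": 0.7, "fuel_efficiency": 0.2, "safety": 0.1}

print(choose_route(routes, safety_first))
print(choose_route(routes, speed_first))
```

The same agent picks a different route when the weights change, which is how a utility function expresses trade-offs that a single yes-or-no goal cannot.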

- Learning Agents

Learning agents improve performance over time through experience. They consist of four components:

- Performance element: Determines actions based on knowledge

- Learning element: Improves knowledge based on feedback

- Critic: Evaluates actions and provides feedback

- Problem generator: Suggests exploratory actions
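The four components can be mapped onto a very small learner. The sketch below is a simplified bandit-style agent, not a full reinforcement learning setup: it explores on a fixed schedule (every fifth step) instead of randomly so the run is reproducible, and the action names and reward values are assumptions.

```python
class LearningAgent:
    def __init__(self, actions, explore_every: int = 5) -> None:
        self.actions = list(actions)
        self.explore_every = explore_every
        self.steps = 0
        self.value = {a: 0.0 for a in self.actions}  # learned knowledge
        self.count = {a: 0 for a in self.actions}

    def performance_element(self) -> str:
        # Picks the action currently believed to be best.
        return max(self.actions, key=lambda a: self.value[a])

    def problem_generator(self) -> str:
        # Suggests an exploratory action: the least-tried one.
        return min(self.actions, key=lambda a: self.count[a])

    def critic(self, outcome: float) -> float:
        # Pass-through here; a real critic would score the outcome
        # against the performance measure.
        return float(outcome)

    def learning_element(self, action: str, feedback: float) -> None:
        # Running average of the feedback received for each action.
        self.count[action] += 1
        self.value[action] += (feedback - self.value[action]) / self.count[action]

    def choose(self) -> str:
        explore = self.steps % self.explore_every == 0
        self.steps += 1
        return self.problem_generator() if explore else self.performance_element()


REWARDS = {"route_a": 0.2, "route_b": 0.8}  # hypothetical, fixed rewards
agent = LearningAgent(REWARDS)
for _ in range(20):
    action = agent.choose()
    agent.learning_element(action, agent.critic(REWARDS[action]))

print(agent.performance_element(), agent.value)
```

Without the problem generator the agent would try `route_a` once, see a positive reward, and never discover that `route_b` is better.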


The Agent Decision Loop

Modern intelligent agents operate through a continuous perception-decision-action loop:

1. PERCEIVE → Gather environmental data through sensors

2. PROCESS → Analyze data, update internal state

3. DECIDE → Determine optimal action based on goals

4. ACT → Execute action through actuators

5. LEARN → Update knowledge based on outcomes

This loop enables agents to adapt to changing conditions and improve performance over time.
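Here is one way the five steps might look in code, using a toy scenario that is not from any real system: an agent keeps a leaking water tank at a target level and gradually learns how fast it leaks. The function name, the leak rate, and the learning rate are all assumptions.

```python
def run_tank_agent(steps: int, target: float = 10.0, leak: float = 2.0,
                   learning_rate: float = 0.5) -> tuple[float, float]:
    """Run the loop and return (final level, learned leak estimate)."""
    level = target          # environment state: current water level
    estimated_leak = 0.0    # agent knowledge, refined in the LEARN step

    for _ in range(steps):
        reading = level                              # 1. PERCEIVE
        shortfall = target - reading                 # 2. PROCESS
        pump = max(0.0, shortfall + estimated_leak)  # 3. DECIDE
        level += pump                                # 4. ACT
        level -= leak                                # environment responds
        outcome_error = target - level               # 5. LEARN from the outcome
        estimated_leak += learning_rate * outcome_error

    return level, estimated_leak


final_level, learned_leak = run_tank_agent(steps=20)
print(round(final_level, 3), round(learned_leak, 3))
```

Early in the run the tank ends each step below the target because the agent has no idea it leaks. As the estimate improves, the agent pumps a little extra in advance and the level settles near the target.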

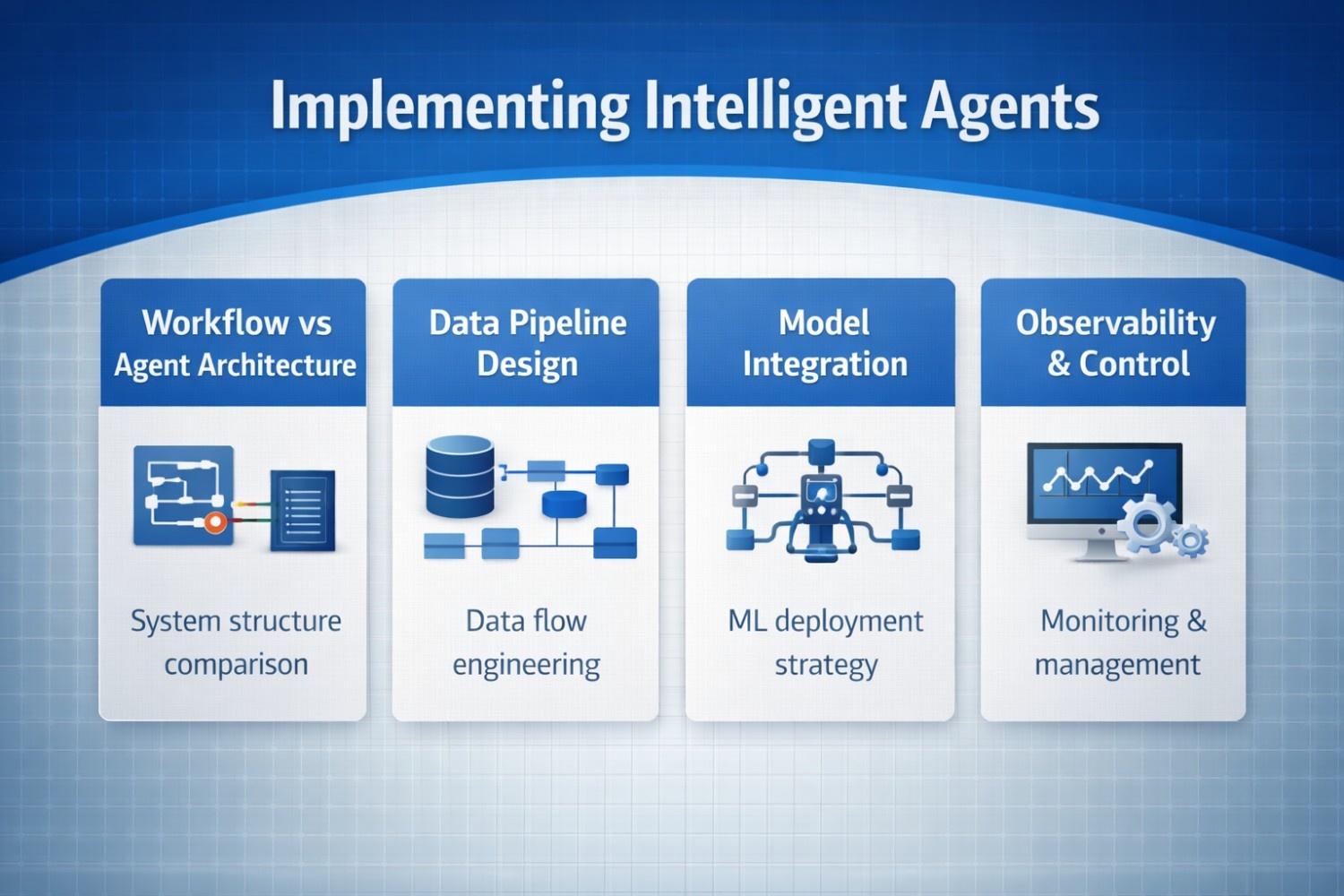

Implementing Intelligent Agents: Developer Considerations

When building intelligent agents, developers must consider several architectural decisions:

1. Workflow vs. Agent Architecture

Deterministic workflows provide predictable execution paths with explicit error handling – ideal for well-defined business processes. Agentic architectures enable dynamic tool selection and adaptive reasoning but introduce complexity and unpredictability.

2. Data Pipeline Design

Your agent is only as good as its data. Implement robust validation, transformation logic, and intelligent caching strategies. According to industry research, over half of AI development projects struggle with unclear objectives and poorly defined success metrics.

3. Model Selection and Integration

Choose models appropriate for your use case. Combine large language models with specialized tools and knowledge sources through techniques like:

- Retrieval-Augmented Generation (RAG): Integrating external knowledge sources

- Chain-of-thought prompting: Encouraging step-by-step reasoning

- Tool use frameworks: Enabling agents to invoke external APIs and services
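To give a feel for how retrieval and prompting fit together, the sketch below retrieves knowledge-base entries by simple word overlap and builds a prompt that includes a step-by-step cue. It deliberately stops before calling any model. The knowledge-base sentences and function names are hypothetical, and production systems typically use embedding-based search instead of word overlap.

```python
import re


def tokenize(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Return up to k documents sharing at least one word with the query."""
    query_words = tokenize(query)
    ranked = sorted(documents,
                    key=lambda doc: len(query_words & tokenize(doc)),
                    reverse=True)
    return [doc for doc in ranked[:k] if query_words & tokenize(doc)]


def build_prompt(query: str, documents: list[str], k: int = 2) -> str:
    """Combine retrieved context, the question, and a reasoning cue."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, k))
    return (f"Context:\n{context}\n\n"
            f"Question: {query}\n"
            "Think step by step before answering.")


# Hypothetical knowledge base for a support agent.
KNOWLEDGE_BASE = [
    "Refunds are processed within 5 business days.",
    "Our support line is open on weekdays.",
    "Shipping is free for orders over 50 dollars.",
]

print(build_prompt("How long do refunds take?", KNOWLEDGE_BASE))
```

The resulting string is what you would pass to whichever model you selected; keeping retrieval and prompt assembly as plain functions makes both easy to test without a model in the loop.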

4. Observability and Control

Nearly 95% of executives have experienced AI-related mishaps, yet only 2% of organizations meet responsible AI standards. Implement:

- Tracing and observability tools

- Guardrails to prevent undesired behavior

- Human oversight mechanisms

- Audit trails for accountability
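Guardrails, human oversight, and audit trails can start out as a thin wrapper around every action the agent wants to take. The sketch below is one possible shape under assumed names: an allow-list blocks unknown actions, a second list holds sensitive actions until a human approves them, and every attempt is logged.

```python
from datetime import datetime, timezone
from typing import Callable, Optional


class GuardedExecutor:
    def __init__(self, allowed_actions, needs_approval) -> None:
        self.allowed_actions = set(allowed_actions)
        self.needs_approval = set(needs_approval)
        self.audit_log: list[dict] = []

    def execute(self, action: str, handler: Callable[[], None],
                approved_by: Optional[str] = None) -> str:
        if action not in self.allowed_actions:
            status = "blocked"                 # guardrail
        elif action in self.needs_approval and approved_by is None:
            status = "pending_approval"        # human oversight
        else:
            handler()
            status = "executed"
        # Audit trail: record every attempt, including refused ones.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "action": action,
            "status": status,
            "approved_by": approved_by,
        })
        return status


# Hypothetical actions for a customer service agent.
executor = GuardedExecutor(allowed_actions={"send_reply", "issue_refund"},
                           needs_approval={"issue_refund"})
done: list[str] = []

print(executor.execute("send_reply", lambda: done.append("reply")))
print(executor.execute("issue_refund", lambda: done.append("refund")))
print(executor.execute("delete_account", lambda: done.append("delete")))
print(executor.execute("issue_refund", lambda: done.append("refund"),
                       approved_by="alice"))
```

Because blocked and pending attempts are logged too, the audit trail shows what the agent tried to do as well as what it actually did.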

Challenges and Future Directions

While intelligent agents offer tremendous potential, developers must navigate several challenges:

Complexity in Dynamic Environments: Real-world scenarios often exceed the simplicity of PEAS components, requiring consideration of multi-agent interactions, emergent behaviors, and long-term planning.

Ethical Considerations: Only 54% of U.S. consumers believe organizations have policies for responsible AI use. Developers must implement ethical guardrails and ensure transparency.

Integration Challenges: Translating PEAS specifications into working systems requires substantial technical expertise, bridging the gap between abstract definitions and concrete implementations.

Conclusion

Understanding how intelligent agents work through the PEAS framework, environment types, and decision-making architectures is essential for building effective AI systems. The PEAS framework provides a structured approach to agent design, while understanding the agent’s environment helps you anticipate challenges and design robust solutions.

As the AI agents market continues its explosive growth, developers who master these foundational concepts will be well-positioned to build the next generation of autonomous systems. Whether you’re working on autonomous vehicles, medical diagnosis systems, or customer service bots, the principles of performance measures, environments, actuators, sensors, and decision-making remain constant.

Start by clearly defining your agent’s performance measures, thoroughly analyzing the environment, selecting appropriate actuators and sensors, and implementing decision-making architectures that balance autonomy with control. The future of AI is agentic, and it’s being built by developers who understand these core principles.

Hi, I’m Pragya.

I write about AI tools, digital trends, and emerging technologies in a way that’s simple, practical, and easy to apply. I enjoy exploring new AI platforms, testing their features, and breaking them down into clear guides that actually help people use them confidently.

My focus is not just on writing content, but on creating value. I believe powerful technology should feel accessible, not overwhelming. That’s why I aim to turn complex tools into actionable insights for creators, marketers, and growing online businesses.

I’m constantly learning, researching, and staying updated with the fast-moving AI space so readers always get relevant and useful information.